Even during the AI winter, there continued to be major innovations. One was backpropagation, which is essential for adjusting the weights of a neural network during training. Another was the recurrent neural network (RNN), whose connections loop back so that the output of one step feeds into the next, which lets the network work with sequences.

The 1980s and 1990s also saw the emergence of expert systems. A key driver was the explosive growth of PCs and minicomputers.

Expert systems were based on the concepts of Minsky’s symbolic logic, involving complex pathways. These were often developed by domain experts in particular fields like medicine, finance, and auto manufacturing.

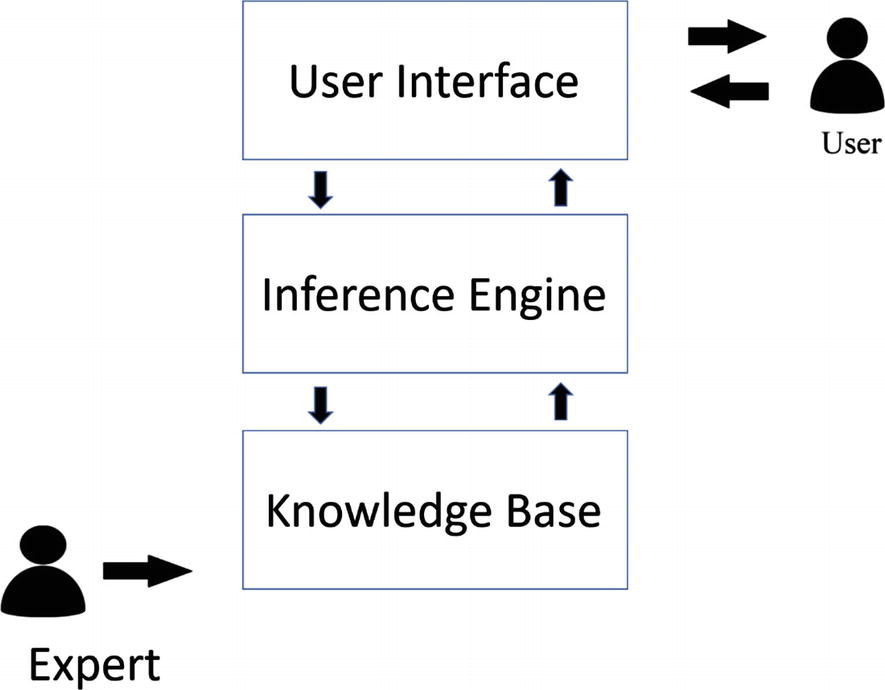

Figure 1-2 shows the key parts of an expert system.

While there are expert systems that go back to the mid-1960s, they did not gain commercial use until the 1980s. An example was XCON (eXpert CONfigurer), which John McDermott developed at Carnegie Mellon University. The system optimized the selection of computer components and initially had about 2,500 rules. Think of it as the first recommendation engine. After its launch in 1980, it proved to be a big cost saver for DEC on its line of VAX computers (about $40 million by 1986).
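To make the idea of a rule-based configurer more concrete, here is a minimal sketch of forward chaining, one common way an expert system applies if-then rules to a set of known facts. It is only an illustration: the `Rule` class, the `forward_chain` function, and every rule and fact name below are hypothetical and are not taken from XCON's actual rule base.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    """An if-then rule: when every condition is a known fact, add the conclusion."""
    name: str
    conditions: frozenset
    conclusion: str


def forward_chain(facts, rules):
    """Apply rules repeatedly until no rule can add a new fact."""
    known = set(facts)
    fired = []  # names of the rules that fired, in order
    changed = True
    while changed:
        changed = False
        for rule in rules:
            if rule.conditions <= known and rule.conclusion not in known:
                known.add(rule.conclusion)
                fired.append(rule.name)
                changed = True
    return known, fired


# Hypothetical configuration rules, invented for illustration only.
RULES = [
    Rule("needs-controller",
         frozenset({"has_disk_drive"}),
         "needs_disk_controller"),
    Rule("needs-bigger-cabinet",
         frozenset({"needs_disk_controller", "has_tape_drive"}),
         "needs_expansion_cabinet"),
    Rule("needs-extra-power",
         frozenset({"needs_expansion_cabinet"}),
         "needs_second_power_supply"),
]

if __name__ == "__main__":
    order = {"has_disk_drive", "has_tape_drive"}
    derived, trace = forward_chain(order, RULES)
    print(sorted(derived - order))  # facts the rules added to the order
    print(trace)                    # which rules fired, in order
```

Each rule only restates what a human expert wrote down, so the system's knowledge grows only when someone adds or edits a rule by hand. With thousands of rules, keeping them consistent becomes a maintenance job in its own right.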

When companies saw the success of XCON, there was a boom in expert systems, which turned into a billion-dollar industry. The Japanese government also saw the opportunity and invested hundreds of millions to bolster its home market. However, the results were mostly a disappointment, and much of the innovation remained in the United States.

Consider that IBM used an expert system for its Deep Blue computer. In 1996, it beat chess grandmaster Garry Kasparov in one game of a six-game match. Deep Blue, which IBM had been developing since 1985, processed 200 million positions per second.

But there were issues with expert systems. They were often narrow and difficult to apply across other categories. Furthermore, as the expert systems got larger, it became more challenging to manage them and feed data. The result was that there were more errors in the outcomes. Next, testing the systems often proved to be a complex process. Let’s face it, there were times when the experts would disagree on fundamental matters. Finally, expert systems did not learn over time. Instead, there had to be constant updates to the underlying logic models, which added greatly to the costs and complexities.

By the late 1980s, expert systems started to lose favor in the business world, and many startups merged or went bust. Actually, this helped cause another AI winter, which would last until about 1993. PCs were rapidly eating into higher-end hardware markets, which meant a steep reduction in Lisp-based machines.

Government funding for AI, such as from DARPA, also dried up. Then again, the Cold War was rapidly coming to a quiet end with the fall of the Soviet Union.
