Edge computing is a distributed information technology (IT) architecture in which client data is processed at the periphery of the network, as close to the originating source as possible.

We have seen that data is the lifeline of any business, as it provides valuable business insight and supports real-time control over critical business operations. Today, every business generates oceans of data, most of it coming from sensors and IoT devices operating in real time. This flood of data is also changing the way computations are done. Traditional computing relies on centralized processing, but bandwidth limitations, latency issues and unpredictable network disruptions pose a serious challenge to moving data and results smoothly. Edge computing offers a way to overcome these challenges.

Edge computing moves some portion of storage and computation out of the central data centre and closer to the source of the data. This not only avoids transmitting raw data to a central server but also allows data to be processed and analysed where it is actually generated, be it a retail store, a factory floor, a sprawling utility or a smart city. Only the results of the computations, which may include real-time business insights, equipment maintenance predictions or other actionable answers, are sent to the central data centre for review.

Apart from its efficiency benefits, edge computing also seems to be the only viable option available today. The number of devices connected to the Internet, and the volume of data they produce, are growing too fast for traditional data centres to handle. Gartner predicted that by 2025, 75% of enterprise-generated data will be created outside centralized data centres. Moving this much data through congested, disruption-prone networks is impractical.

So, IT architects have shifted their focus from the central data centre to the logical edge of the infrastructure, taking storage and computing resources out of the data centre and moving them to the locations where data is generated. Their reasoning is simple: ‘if you can’t get the data closer to the data centre, get the data centre closer to the data.’

A few applications that benefit from edge computing include the following:

- Retail environments that combine video surveillance of the showroom floor with actual sales data to analyse trends in consumer demand.

- Predictive analytics that guide equipment maintenance and repair before actual defects or failures occur.

- Utilities like water treatment or electricity generation.

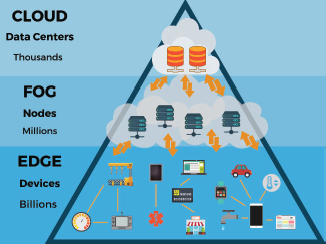

Fog computing environments produce massive amounts of sensor or IoT data across physical areas that are too large for a single edge. Examples include smart buildings, smart cities and even smart utility grids. In a smart city, data can be used to track, analyse and optimize the public transit system, municipal utilities and city services, and to guide long-term urban planning. A single edge deployment simply isn’t enough to handle such a load, so fog computing comes into the picture: it distributes compute and storage resources across many intermediate points between the edge devices and the cloud.

9.4.1 Why is Edge Computing Important?

Consider the steadily growing demand for self-driving cars. These vehicles rely on intelligent traffic control signals, which in turn need to produce, analyse and exchange data in real time. Multiply this requirement by a huge number of autonomous vehicles to get a picture of how much data must be generated and processed every second. Clearly, this calls for a fast and responsive network, and edge and fog computing address the following limitations of traditional computing.

Bandwidth

Bandwidth refers to the amount of data that can be transmitted across a network over time. It is expressed in bits per second.

Every network has a limited bandwidth. Wireless networks typically have tighter bandwidth limits than wired networks. This means that only a limited amount of data, from a finite number of devices, can be exchanged across the network at any time.

Network bandwidth can be increased to accommodate more devices and data, but only at a significant cost.
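Because bandwidth is quoted in bits per second while stored data is usually measured in bytes, the time needed to move a payload is its size in bits divided by the usable bandwidth. The sketch below, a minimal illustration, works this out; the function name, the 1 GB payload and the 10 Mbit/s and 1 Gbit/s link speeds are illustrative assumptions, not figures from the text.

```python
def transfer_time_seconds(data_bytes: float, bandwidth_bps: float, utilization: float = 1.0) -> float:
    """Time to move data_bytes over a link of bandwidth_bps (bits per second).

    utilization is an illustrative parameter: the fraction of the link
    actually available to this transfer (1.0 means the whole link).
    """
    if bandwidth_bps <= 0 or not 0 < utilization <= 1:
        raise ValueError("bandwidth must be positive and utilization in (0, 1]")
    bits = data_bytes * 8  # bandwidth is quoted in bits, storage in bytes
    return bits / (bandwidth_bps * utilization)


if __name__ == "__main__":
    payload = 1e9  # 1 GB (decimal), an illustrative payload
    for label, bps in [("10 Mbit/s wireless uplink", 10e6), ("1 Gbit/s wired LAN", 1e9)]:
        print(f"{label}: {transfer_time_seconds(payload, bps):.0f} s")
```

The same gigabyte that crosses a 1 Gbit/s local network in seconds occupies a 10 Mbit/s uplink for over ten minutes, which is why reducing what leaves the site matters.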

Latency

Latency is the time needed to transfer data from one point to another on a network. Ideally, data would travel at close to the speed of light, but in practice communication is slowed by large physical distances and by issues such as network congestion. Delays in data transfer adversely affect analysis and decision-making, hampering a system’s ability to respond in real time. In certain situations, such as autonomous driving, latency can even cost lives.
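Physical distance alone sets a floor on latency. A minimal sketch, assuming signals in optical fibre travel at roughly 200,000 km/s (about two-thirds of the speed of light in a vacuum), computes the lowest possible round-trip time for a given distance; the distances used are illustrative assumptions.

```python
# Assumption: signals in optical fibre travel at roughly 200,000 km/s,
# about two-thirds of the speed of light in a vacuum.
FIBRE_SPEED_KM_PER_S = 200_000


def min_round_trip_ms(distance_km: float) -> float:
    """Lower bound on round-trip time from propagation delay alone.

    Real latency is higher: routing, queuing (congestion) and processing add to it.
    """
    return 2 * distance_km / FIBRE_SPEED_KM_PER_S * 1000


if __name__ == "__main__":
    for where, km in [("edge server on site", 1), ("regional data centre", 500), ("distant cloud region", 4000)]:
        print(f"{where:>22}: >= {min_round_trip_ms(km):.2f} ms")
```

No amount of extra bandwidth removes this propagation delay; only moving the computation closer to the data does.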

Congestion

The Internet is a global ‘network of networks’ that connects billions of computers all over the world to facilitate data exchange. The sheer volume of data these devices exchange can overwhelm the network with packets, causing congestion. On top of this, network outages (or server/machine downtime) compound the problem and can render IoT devices that depend on a remote server useless.

By deploying servers and storage near the location where data is generated, edge computing (refer Fig. 9.16) lets many devices communicate over a much smaller and more efficient LAN, whose bandwidth is shared only by local devices. This makes latency and congestion issues virtually nonexistent.

Edge computing can reduce network costs, avoid bandwidth constraints, reduce transmission delays, limit service failures and provide better control over the movement of sensitive data.

In edge computing, local storage collects and protects the raw data, local servers perform essential data processing and analysis to make decisions in real time, and then they send the results, or just the essential data, to the cloud or central data centre.
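The division of labour described above can be sketched as follows. This is a minimal illustration, not a reference design: the `EdgeNode` class, the single alarm threshold and the particular summary fields are hypothetical choices made for the example.

```python
from dataclasses import dataclass, field
from statistics import mean
from typing import Dict, List


@dataclass
class EdgeNode:
    """Hypothetical edge node: keeps raw readings on site, sends only a summary upstream."""

    alarm_threshold: float
    raw_store: List[float] = field(default_factory=list)  # local storage for raw data

    def ingest(self, reading: float) -> bool:
        """Store the raw reading locally and return True if it needs immediate action."""
        self.raw_store.append(reading)
        # Real-time decision made on site, with no round trip to the cloud.
        return reading > self.alarm_threshold

    def summary_for_cloud(self) -> Dict[str, float]:
        """Only these aggregated results leave the site, not the raw data."""
        if not self.raw_store:
            return {"count": 0}
        return {
            "count": len(self.raw_store),
            "mean": mean(self.raw_store),
            "max": max(self.raw_store),
            "alarms": sum(r > self.alarm_threshold for r in self.raw_store),
        }


if __name__ == "__main__":
    node = EdgeNode(alarm_threshold=80.0)
    for reading in [71.5, 72.0, 85.2, 70.9]:
        if node.ingest(reading):
            print(f"local action: reading {reading} exceeds threshold")
    print("sent to cloud:", node.summary_for_cloud())
```

However many readings arrive, the upstream message stays a handful of numbers, while the immediate reaction to a bad reading never waits on the network.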

9.4.2 Edge Computing Use Cases and Examples

Technically, edge computing collects, filters, processes and analyses data ‘in-place’ at or near the network edge. Because it addresses vital issues such as network bandwidth and congestion, it is used in several domains, including the following:

Manufacturing

Manufacturers use edge computing to monitor the manufacturing process, enable real-time analytics and identify production errors to improve product quality. Edge computing also supports the addition of environmental sensors throughout the manufacturing plant to provide insight into how each product component is assembled and stored. Manufacturing units also use this technology to make faster and more accurate business decisions about their operations.

Farming

In indoor farming, edge computing makes efficient use of sensors that track water use and nutrient density and help determine the optimal harvest time. Data is collected and analysed to find the effects of environmental factors, improve the crop-growing algorithms and ensure that crops are harvested in peak condition. Without edge computing, it is very challenging to grow crops indoors without sunlight, soil or pesticides. The process reduces grow times by more than 60%. Hence, edge computing is a promising way to produce better results.

Network Optimization

Edge computing assists in optimizing the performance of a network by analysing data and identifying the most reliable, low-latency network path for each user’s traffic.
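One simple way to combine ‘most reliable’ with ‘low-latency’ is to discard paths whose packet loss is too high and then pick the fastest of the rest. The sketch below assumes that approach; the `PathStats` fields, the 1% loss cut-off and the path names are hypothetical, and real systems may weigh these factors differently.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass
class PathStats:
    name: str
    latency_ms: float  # recently measured round-trip latency
    loss_rate: float   # fraction of packets lost, 0.0 to 1.0


def pick_path(paths: Sequence[PathStats], max_loss: float = 0.01) -> Optional[PathStats]:
    """Choose the lowest-latency path among those considered reliable (loss <= max_loss)."""
    reliable = [p for p in paths if p.loss_rate <= max_loss]
    if not reliable:
        return None  # no path meets the reliability bar
    return min(reliable, key=lambda p: p.latency_ms)


if __name__ == "__main__":
    candidates = [
        PathStats("fibre", 12.0, 0.001),
        PathStats("lte", 35.0, 0.005),
        PathStats("wifi-mesh", 9.0, 0.04),  # fastest, but too lossy
    ]
    best = pick_path(candidates)
    print("route via:", best.name if best else "none")
```

Because the measurements are taken at the edge, the choice can be refreshed per user and per moment rather than fixed centrally.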

Workplace Safety

Edge computing can combine and analyse data from on-site cameras, employee safety devices and various other sensors to help businesses monitor workplace conditions and ensure that employees follow established safety protocols. This is especially important when the workplace is remote or unusually dangerous (such as construction sites or oil rigs).

Improved Healthcare

The healthcare industry generates massive amounts of patient data, often collected from devices, sensors and other medical equipment. Edge computing helps automate the storage and processing of this data. Machine learning algorithms analyse the data and flag information that requires immediate action, helping patients avoid health incidents in real time.

Transportation

An autonomous vehicle can produce anywhere from 5 TB to 20 TB of data every day. Data about location, speed, vehicle condition, road conditions, traffic conditions and other vehicles needs to be stored and analysed in real time, while the vehicle is in motion. In such a scenario, each autonomous vehicle effectively becomes an ‘edge’, and the data it processes helps authorities and businesses manage vehicle fleets based on actual conditions on the ground.
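To see why this data cannot simply be streamed to a remote data centre, convert the daily volume into an average data rate. The sketch assumes decimal terabytes (1 TB = 10^12 bytes) and spreads the volume evenly over 24 hours; a vehicle that drives only part of the day would generate data faster while moving.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def average_rate_gbps(terabytes_per_day: float) -> float:
    """Average data rate in Gbit/s for a daily volume in decimal terabytes (1 TB = 10**12 bytes)."""
    bits_per_day = terabytes_per_day * 10**12 * 8
    return bits_per_day / SECONDS_PER_DAY / 10**9


if __name__ == "__main__":
    for tb in (5, 20):
        print(f"{tb} TB/day is about {average_rate_gbps(tb):.2f} Gbit/s sustained")
```

Even averaged over a full day, the upper figure works out to nearly 2 Gbit/s per vehicle, far beyond what a typical cellular uplink can sustain.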

Retail

Retail businesses generate enormous data volumes from surveillance, stock tracking, sales data and other real-time business details. Edge computing helps analyse this diverse data to identify business opportunities, such as an effective endcap or campaign, predict sales and optimize vendor ordering. Since conditions vary from one store to another, edge computing is preferred for processing the data locally at each store.

Immersive Experience

Real-time applications, such as AR/VR, cannot tolerate more than a few milliseconds of latency and can be extremely sensitive to jitter, or latency variation. In many applications, closed-loop automation is used to maintain high availability with response times in the tens of milliseconds. These stringent requirements cannot be met without edge computing infrastructure.
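Jitter can be quantified in several ways. A minimal sketch, assuming the simple definition ‘mean absolute difference between consecutive latency samples’, is shown below; it is a simplified measure, not the smoothed interarrival-jitter estimator defined for RTP in RFC 3550, and the sample values are illustrative.

```python
from statistics import mean
from typing import Sequence


def jitter_ms(latencies_ms: Sequence[float]) -> float:
    """Mean absolute difference between consecutive latency samples (simplified jitter)."""
    if len(latencies_ms) < 2:
        return 0.0  # need at least two samples to see any variation
    diffs = [abs(b - a) for a, b in zip(latencies_ms, latencies_ms[1:])]
    return mean(diffs)


if __name__ == "__main__":
    steady = [20.0, 20.5, 19.8, 20.2]  # higher latency, but consistent
    bursty = [5.0, 25.0, 6.0, 30.0]    # lower on average, but erratic
    print(f"steady link jitter: {jitter_ms(steady):.2f} ms")
    print(f"bursty link jitter: {jitter_ms(bursty):.2f} ms")
```

A link can have a low average latency and still be unsuitable for AR/VR if its jitter is high, which is why both numbers matter.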

By easing bandwidth constraints, edge computing supports new immersive applications, including AR/VR, 4K video and 360° imaging for verticals like healthcare. Caching and optimizing content at the edge is already becoming a necessity, since protocols like TCP do not perform well when there are sudden changes in radio network traffic.
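Edge caching is often built around a least-recently-used (LRU) eviction policy: popular content stays on the edge site, and only misses travel to the distant origin server. The sketch below assumes LRU; the `EdgeCache` class and its `fetch_from_origin` callback are hypothetical names for illustration.

```python
from collections import OrderedDict
from typing import Callable


class EdgeCache:
    """Hypothetical LRU cache for content served from an edge site."""

    def __init__(self, capacity: int, fetch_from_origin: Callable[[str], bytes]) -> None:
        self.capacity = capacity
        self.fetch_from_origin = fetch_from_origin  # slow path: the distant origin server
        self._items: "OrderedDict[str, bytes]" = OrderedDict()
        self.hits = 0
        self.misses = 0

    def get(self, key: str) -> bytes:
        if key in self._items:
            self._items.move_to_end(key)  # mark as most recently used
            self.hits += 1
            return self._items[key]
        self.misses += 1
        value = self.fetch_from_origin(key)
        self._items[key] = value
        if len(self._items) > self.capacity:
            self._items.popitem(last=False)  # evict the least recently used item
        return value


if __name__ == "__main__":
    cache = EdgeCache(capacity=2, fetch_from_origin=lambda k: f"video segment {k}".encode())
    for segment in ["a", "b", "a", "c", "b"]:
        cache.get(segment)
    print(f"hits={cache.hits} misses={cache.misses}")
```

Every hit is a request answered over the short local link instead of the congested path to the origin.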

When edge computing infrastructure is used to access real-time network data, it can reduce video stalls and delays by up to 20% during peak viewing hours.

Education

Edge computing facilitates software-based education solutions that use on-device AI for personalized virtual assistance, natural language interaction and even augmented reality experiences. For example, ViewSonic’s digital whiteboard uses edge technology to re-create the classroom experience for students and teachers engaged in distance learning.

9.4.3 Benefits of Edge Computing

Besides solving critical issues like bandwidth limitations, excess latency and network congestion, edge computing also offers the following benefits to users.

Autonomy

Where connectivity is unreliable or bandwidth is restricted by a location’s geography, edge computing is a viable solution. For example, oil rigs, ships at sea, remote farms and remote locations such as rainforests or deserts use edge computing to do work on site, sometimes on the edge device itself. Consider a water purifier with built-in water quality sensors in a remote village: edge computing can keep the data in local storage and transmit it to a central point only when connectivity is available. Because the data is processed locally, far less of it needs to be sent, so much less bandwidth or connectivity time is required than would otherwise be needed.
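The water purifier example follows a store-and-forward pattern: summarise locally, buffer, and upload when a link appears. A minimal sketch under those assumptions follows; the `StoreAndForward` class, the caller-supplied `send` function, the buffer limit and the pH-style readings are all hypothetical.

```python
from collections import deque
from statistics import mean
from typing import Callable, Deque, Dict, List

Summary = Dict[str, float]


class StoreAndForward:
    """Hypothetical gateway that summarises readings locally and uploads when a link is up."""

    def __init__(self, send: Callable[[Summary], None], max_buffered: int = 1000) -> None:
        self.send = send  # assumed upload function supplied by the caller
        # Bounded local storage: if the link is down for too long, the oldest summaries are dropped.
        self.pending: Deque[Summary] = deque(maxlen=max_buffered)

    def record_batch(self, readings: List[float]) -> None:
        """Reduce a batch of raw readings to a few numbers before storing it."""
        self.pending.append({"n": len(readings), "mean": mean(readings), "max": max(readings)})

    def flush(self, link_up: bool) -> int:
        """Upload buffered summaries if connectivity is available; return how many were sent."""
        if not link_up:
            return 0
        sent = 0
        while self.pending:
            self.send(self.pending[0])  # send first, so a failed upload keeps the item
            self.pending.popleft()
            sent += 1
        return sent


if __name__ == "__main__":
    outbox: List[Summary] = []
    gateway = StoreAndForward(outbox.append)
    gateway.record_batch([7.1, 7.3, 6.9])  # e.g. pH readings collected while offline
    gateway.record_batch([7.0, 7.2])
    print("sent while offline:", gateway.flush(link_up=False))
    print("sent once connected:", gateway.flush(link_up=True))
```

Sending each summary before removing it from the buffer means an upload that raises an error leaves the data in local storage for the next attempt.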

Data Sovereignty

Transmitting large volumes of data not only adds to technical woes but also poses a serious threat to security and privacy and raises legal issues, especially when data crosses national and international boundaries. Edge computing keeps data close to its source and within the bounds of prevailing data sovereignty laws, which define how data should be stored, processed and exposed. Processing raw data locally makes it possible to secure sensitive data before it is exchanged with the cloud or primary data centre (which may be in another jurisdiction).
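One way to secure sensitive data locally is to pseudonymise it before it leaves the site. The sketch below assumes keyed hashing (HMAC-SHA256) as the technique; the field names, the `SITE_KEY` placeholder and the 16-character token length are hypothetical choices, and this is one option among several rather than a complete compliance solution.

```python
import hashlib
import hmac
from typing import Dict, Iterable

# Assumption: a secret key that is generated and kept at the edge site only.
SITE_KEY = b"replace-with-a-secret-kept-on-site"


def pseudonymise(record: Dict[str, str],
                 sensitive_fields: Iterable[str] = ("patient_name", "national_id")) -> Dict[str, str]:
    """Return a copy of record with sensitive fields replaced by keyed-hash tokens."""
    out = dict(record)  # leave the locally stored original untouched
    for name in sensitive_fields:
        if name in out:
            digest = hmac.new(SITE_KEY, out[name].encode("utf-8"), hashlib.sha256).hexdigest()
            # Same input gives the same token, so records can still be linked centrally,
            # but the token alone does not reveal the original value.
            out[name] = digest[:16]
    return out


if __name__ == "__main__":
    raw = {"patient_name": "A. Sharma", "national_id": "1234-5678", "heart_rate": "72"}
    print(pseudonymise(raw))
```

The central site receives consistent tokens it can aggregate on, while the identifying values stay within the local jurisdiction.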

Edge Security

Edge computing can improve data security. Since data and the results of analysing it still have to travel to the cloud or data centre, they can be protected in transit through encryption. Edge deployments can also be hardened against hackers and other malicious activity.

Moreover, edge computing is similar to data centre computing in the following respects:

- It includes compute, storage and networking resources.

- Its resources may be shared amongst many users across several applications.

- It can benefit from virtualization and abstraction of the resource pool.

- It supports the ability to leverage commodity hardware.

- It uses APIs to support interoperability.

9.4.4 Challenges of Edge Computing

Although edge computing provides a range of benefits across a multitude of use cases, the technology is far from foolproof. There are certain issues that can affect the adoption of edge computing at a large scale. These include the following.

Limited Capability

Edge computing deploys a variety of resources to provide a range of services, but an edge deployment must be clearly defined and justified to be effective. This is because even an extensive edge computing deployment serves a specific purpose at a predetermined scale; it cannot offer the breadth of resources and services of a central data centre or the cloud.

Connectivity

Edge computing still needs to transfer data to the cloud or central data centre, so its capability remains somewhat restricted by network connectivity.

Security

IoT devices are notoriously insecure, so edge computing deployments need to take extra care with device management, policy-driven configuration enforcement and security in the computing and storage resources. Regular software patches and updates, along with encryption, help keep communications secure.

Data Lifecycles

Today, a lot of data is generated and collected by IoT devices, but most of it is not needed after a short time, so it need not be stored long term. The business must therefore be able to decide which data to keep and which to discard once the analysis is complete. It is also responsible for retaining and protecting the required data in accordance with business and regulatory policies.
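A keep-or-discard decision like this can be expressed as a retention policy. The sketch assumes a per-category retention period and a rule that nothing is discarded before it has been analysed; the `Record` fields, the category names and the periods are hypothetical, since real values come from business and regulatory requirements.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Dict, List, Tuple


@dataclass
class Record:
    created: datetime
    kind: str       # e.g. "raw_sensor" or "summary" (illustrative categories)
    analysed: bool  # has the analysis step already used this record?


# Hypothetical policy; real periods come from business and regulatory requirements.
RETENTION: Dict[str, timedelta] = {
    "raw_sensor": timedelta(days=1),
    "summary": timedelta(days=365),
}


def apply_retention(records: List[Record], now: datetime) -> Tuple[List[Record], List[Record]]:
    """Split records into (keep, discard)."""
    keep: List[Record] = []
    discard: List[Record] = []
    for r in records:
        limit = RETENTION.get(r.kind)
        # Keep anything with no policy, not yet analysed, or still within its period.
        if limit is None or not r.analysed or now - r.created <= limit:
            keep.append(r)
        else:
            discard.append(r)
    return keep, discard


if __name__ == "__main__":
    now = datetime.now(timezone.utc)
    records = [
        Record(now - timedelta(days=3), "raw_sensor", True),  # expired raw data
        Record(now - timedelta(days=3), "summary", True),     # summaries are kept longer
    ]
    keep, discard = apply_retention(records, now)
    print(f"keep {len(keep)}, discard {len(discard)}")
```

Defaulting to ‘keep’ for unknown categories errs on the side of retention, which is usually the safer failure mode where regulations apply.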

Greater Demands from Local Hardware

Edge computing requires more powerful and robust local hardware. For example, a simple IoT camera needs only a basic built-in computer to send its raw video data to a web server. With edge computing, the camera needs a more powerful computer with more processing power to run its own motion-detection algorithms.
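Even the simplest on-camera motion detection means comparing every pixel of every frame, which is work a send-only camera never has to do. The sketch below uses naive frame differencing as an illustrative technique (real cameras typically use more robust algorithms); frames are assumed to be grayscale lists of pixel values, and both thresholds are hypothetical.

```python
from typing import List

Frame = List[List[int]]  # grayscale pixels, 0-255


def motion_detected(prev: Frame, curr: Frame,
                    pixel_delta: int = 25, min_changed_fraction: float = 0.01) -> bool:
    """Naive frame differencing: flag motion if enough pixels changed noticeably."""
    total = changed = 0
    for row_prev, row_curr in zip(prev, curr):
        for a, b in zip(row_prev, row_curr):
            total += 1
            if abs(a - b) > pixel_delta:  # ignore small changes such as sensor noise
                changed += 1
    return total > 0 and changed / total >= min_changed_fraction


if __name__ == "__main__":
    still = [[10] * 10 for _ in range(10)]
    moved = [row[:] for row in still]
    for y in (4, 5):
        for x in (4, 5):
            moved[y][x] = 200  # a small bright object appears
    print("motion:", motion_detected(still, moved))
```

In return for the extra processing, the camera only needs to upload clips or events when motion is detected instead of a continuous raw stream.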

Difficult to Scale

Scaling out edge servers to many small sites is more complicated than adding the equivalent capacity to a single data centre. Moreover, each additional physical location adds management overhead.

Limited Support

Edge computing sites are usually remote, with little or no on-site technical expertise. If something fails on site, the infrastructure must be designed so that non-technical local staff can fix it easily. More complex issues can then be addressed centrally by a small number of experts located elsewhere.

Issues in Site Management

It takes more time, money, resources and effort to manage all the sites that are part of an edge computing deployment. It is even more challenging when software is implemented differently at each site.

More Prone to Attacks

Physical security of edge sites is often much lower than that of the central site, so there is a greater risk of malicious attacks and accidental damage.

9.4.5 Edge Computing Implementation

Edge computing may seem a straightforward idea, but implementing it is a challenging exercise.

The first step in deploying any technology successfully is to create a meaningful business and technical edge strategy that establishes why edge computing is needed. Answering this ‘why’ demands a clear understanding of the technical and business problems the organization needs to solve, such as overcoming network constraints or maintaining data sovereignty.

An edge data center requires careful upfront planning and migration strategies.

The edge strategy starts with what the edge means, where it exists for the business and how it should benefit the organization. It should align with existing business plans and technology roadmaps.

As the project moves closer to implementation, evaluate hardware and software options carefully. Select a vendor by going through each vendor’s offering and evaluating it on cost, performance, features, interoperability and support. The software tools should provide comprehensive visibility and control over the remote edge environment. Some of the vendors in edge computing are Adlink Technology, Cisco, Amazon, Dell EMC and HPE.

Actual edge computing deployments vary dramatically in scope and scale; no two edge deployments are the same. Because of these variations, edge strategy and planning are critical to the success of an edge project.

An edge deployment demands comprehensive monitoring. Because stationing IT staff at every site may not be possible, edge deployments should be architected with tools that:

- Ensure resilience, fault tolerance and self-healing capabilities

- Can be deployed remotely

- Are easy to configure

- Offer comprehensive alerting and reporting on site availability or uptime, network performance, storage capacity and utilization, and compute resources

- Maintain the security of the installation and its data, with an emphasis on vulnerability management and intrusion detection and prevention
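The alerting requirement in the list above can be sketched as a per-site threshold check run against metrics reported by each site. All names and threshold values here (`SiteMetrics`, `THRESHOLDS`, the site names) are hypothetical; a real deployment would feed such checks from its monitoring tools and tune thresholds per site.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class SiteMetrics:
    site: str
    uptime_pct: float        # availability over the reporting window
    latency_ms: float        # network performance
    storage_used_pct: float  # storage utilization
    cpu_used_pct: float      # compute utilization


# Hypothetical thresholds; suitable values depend on the deployment.
THRESHOLDS: Dict[str, float] = {
    "uptime_min": 99.5,
    "latency_max": 50.0,
    "storage_max": 85.0,
    "cpu_max": 90.0,
}


def check_site(m: SiteMetrics, t: Dict[str, float] = THRESHOLDS) -> List[str]:
    """Return human-readable alerts for any metric outside its threshold."""
    alerts: List[str] = []
    if m.uptime_pct < t["uptime_min"]:
        alerts.append(f"{m.site}: uptime {m.uptime_pct}% below {t['uptime_min']}%")
    if m.latency_ms > t["latency_max"]:
        alerts.append(f"{m.site}: latency {m.latency_ms} ms above {t['latency_max']} ms")
    if m.storage_used_pct > t["storage_max"]:
        alerts.append(f"{m.site}: storage {m.storage_used_pct}% used")
    if m.cpu_used_pct > t["cpu_max"]:
        alerts.append(f"{m.site}: CPU {m.cpu_used_pct}% used")
    return alerts


if __name__ == "__main__":
    sites = [
        SiteMetrics("store-014", 99.9, 18.0, 62.0, 40.0),
        SiteMetrics("rig-north", 98.7, 80.0, 92.0, 55.0),
    ]
    for s in sites:
        for alert in check_site(s):
            print("ALERT", alert)
```

Running checks like this automatically lets a small central team watch many unstaffed sites and act only when something crosses a threshold.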

9.4.6 Edge Computing, IoT and 5G Possibilities

Edge computing is evolving with new technologies, and edge services are expected to become available worldwide by 2028. Today, edge computing is situation-specific, but it is expected to become more ubiquitous and support many more use cases in the future.

More devices and software (compute, storage and network) will be developed for edge computing.

Multivendor partnerships will provide better product interoperability and flexibility at the edge. For example, AWS and Verizon will together bring better connectivity to the edge.

Wireless communication technologies, such as 5G and Wi-Fi 6, will also boost edge deployments and utilization in the coming years. This will pave the way for enhanced virtualization and automation capabilities, better vehicle autonomy and workload migrations to the edge, while making wireless networks more flexible and cost-effective.

The future will focus on the development of micro modular data centres (MMDCs). An MMDC is essentially a data centre in a box: a complete data centre packaged in a small, mobile system that can be deployed across a city or a region to bring computing much closer to the data.

Moreover, AI algorithms that require large amounts of processing power currently run on cloud-based services, but AI chipsets capable of doing this work at the edge will let edge systems handle more of these computations.

Network function virtualization (NFV), which runs network functions as software on standard hardware, is at the heart of many edge computing applications because it provides infrastructure functionality. Telecom operators are now transforming their service delivery models by running virtual network functions as part of, or layered on top of, an edge computing infrastructure.
