We would like to develop a measure for the rather abstract concept of information, so that the efficiencies of different protocols or physical systems of communication can be compared, and more efficient systems designed.

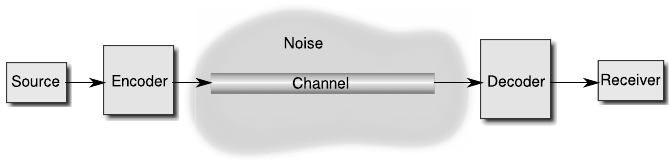

FIGURE 11.1: Simplified model for communication

We need to first have a model for the process we are describing, and Shannon’s proposed model has stuck (Figure 11.1). We start with a source of information which, like a talking person or a buzzing telephone receiver, generates messages using some predefined language consisting of symbols we will call the alphabet. The alphabet could, for example, be the set of English letters for communicating through a written message, a set of “dit”s and “da”s for a message in Morse code, or 0’s and 1’s for a computerized message.

212 Introduction to Quantum Physics and Information Processing

Any message emitted by the source is then encoded for transmission using the physical system involved. For a talking person, the message is encoded in the vibration of the air molecules. For a telephonic message, the encoding is in terms of analog electrical signals in the wire. For the now-obsolete telegraph, encoding was done in binary “dit”s and “da”s, represented as short or long electric pulses, which have since been refined into the 0s and 1s of the modern binary computer. The encoded message is then transmitted across a channel. In these examples the channel is obvious: the air carrying the spoken message, the wire carrying the telegraph signal, or the optical cable transmitting long-distance digital messages. In the context of quantum information, the channel may well just be the environment that the quantum system finds itself in between computational steps.

The importance of the channel in information theory lies in how much it costs to use, and how it may distort or reduce the quality of the message being sent. We need to quantify how far the message can be compressed, in order to use resources more efficiently, as well as the capacity of the channel to carry information in the presence of noise. Noise could be literal in the case of a talking person, random electrical signals in a telephone or telegraph wire or in computer circuitry, or the change in the state of a quantum system interacting inadvertently with its environment. At the other end of the communication model are the decoder and finally the receiver of the message, which could be the ear of the listener, the earpiece of the telephone, the printed output of the telegraph, or the monitor of the computer.

We will now see how we can develop a measure for the information carried by a message, or by a symbol in the message. The appearance of a symbol at the receiver’s end is an event, whose probability can be predicted if we have some idea of the properties of the source. We will talk in the language of events and their probabilities of occurrence.

Characterization of Quantum Information 213

11.1.1 Classical picture: Shannon entropy

According to Shannon, the information associated with an event is related to its probability of occurrence. Let’s take a simple example. Suppose a magician has hidden a ball in one of three boxes labeled 1, 2, and 3. Now he asks you to choose the box in which the ball is hidden. How would you choose? It depends on the information you have about the ball’s location. In the beginning, you do not know which box it is in, so you consider it equally probable to be in any of the three boxes: as far as you know, the ball is in each box with probability 1/3. The situation is thus described by an initial probability distribution $P_{\text{in}}$:

$$P_{\text{in}}(\text{ball is in 1}) = \tfrac{1}{3}, \quad P_{\text{in}}(\text{ball is in 2}) = \tfrac{1}{3}, \quad P_{\text{in}}(\text{ball is in 3}) = \tfrac{1}{3}.$$

An event such as opening any one box will now give you further information on where the ball is among the three. Suppose you open one box, say box 2, and the ball is not in it. The probability distribution has now changed, conditioned on the event of having opened box 2 and found it empty:

$$P_2(\text{ball is in 1}) = \tfrac{1}{2}, \quad P_2(\text{ball is in 2}) = 0, \quad P_2(\text{ball is in 3}) = \tfrac{1}{2}.$$

Opening another box now only tells you which of the remaining two boxes holds the ball.

On the other hand, what about the information the magician has in the same situation? He knows that he has put the ball in box 1, so his distribution is

$$P_m(\text{ball is in 1}) = 1, \quad P_m(\text{ball is in 2}) = 0, \quad P_m(\text{ball is in 3}) = 0.$$

In this case opening a box adds no information at all to the magician’s knowledge!
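The update from $P_{\text{in}}$ to $P_2$ is simply conditioning on the observed event. A minimal Python sketch, assuming the ball is in exactly one box and using a hypothetical helper `condition_on_empty` (not from the text), reproduces your update and shows that the magician’s distribution does not change:

```python
from fractions import Fraction

def condition_on_empty(dist, opened):
    """Condition a box distribution on finding box `opened` empty (Bayes' rule).

    `dist` maps box label -> probability that the ball is in that box.
    The opened box gets probability 0 and the others are renormalized.
    """
    p_empty = 1 - dist[opened]   # probability of finding the opened box empty
    if p_empty == 0:
        raise ValueError("the ball is certainly in the opened box")
    return {box: (Fraction(0) if box == opened else p / p_empty)
            for box, p in dist.items()}

# Your initial distribution P_in and the magician's distribution P_m
p_in = {1: Fraction(1, 3), 2: Fraction(1, 3), 3: Fraction(1, 3)}
p_m = {1: Fraction(1), 2: Fraction(0), 3: Fraction(0)}

p_2 = condition_on_empty(p_in, opened=2)
p_m_after = condition_on_empty(p_m, opened=2)

print(p_2)               # P_2: box 1 -> 1/2, box 2 -> 0, box 3 -> 1/2
print(p_m_after == p_m)  # True: the magician's knowledge is unchanged
```

Exact fractions are used so that the conditioned probabilities match the values in the text exactly rather than approximately.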

This example teaches us a few things:

1. The information carried by an event is related inversely to its probability of occurrence before it has occurred. (After the event, the probability is of course 1!) The more probable the occurrence, the less information the event carries.
2. The occurrence of an event removes doubts about the possibilities before it occurs. The information it carries is thus the doubt it removes by occurring.
3. The information carried by an event is changed if a previous event carries related information. An event that has already happened carries no new information, as compared to an event that has not yet happened.

Let’s now try to quantify the information $I$ carried by an event $E$. If we are talking about messages encoded in symbols sent across a channel, then the event is the reading of a symbol by the receiver. The occurrence of a particular symbol $x$ is the event $E(x)$, with probability $p(x)$, where $\sum_x p(x) = 1$. Now, the mathematical formulation of information is concerned with the syntactic form of the message rather than the semantic. This means that we are not going to worry about the meaning conveyed by a message in a literal sense (which would depend on how the read symbol is translated by the receiver’s brain), but rather with the form of the message itself, in terms of the symbols it carries. In other words, the information carried by a symbol does not depend on which symbol it is, but only on our ignorance, or uncertainty, about its occurrence before it is read. Thus the information carried by the symbol $x$ on the occurrence of event $E(x)$ must depend inversely on $p(x)$.

There are some properties we expect the information function to have. Suppose two events $E_1$ and $E_2$ both occur. The information carried by this joint occurrence is $I(E_1 + E_2)$. If the result of one event $E_1$ is revealed, and the two events are unrelated (independent), so that knowing $E_1$ tells us nothing about $E_2$, then the information carried by the other event must be the difference: $I(E_2) = I(E_1 + E_2) - I(E_1)$. Thus, the information carried by the joint event is the sum of the information carried by each. So if many independent events occur sequentially, the information accumulates additively, one event at a time.

Remember that if an event is certain to occur, then it carries no information. A certainty is represented by probability 1, so, writing $I$ as a function of the probability, $I(1) = 0$. (That’s like a computer that is switched off, so it reveals no information!)

Collecting all these properties together, we require our mathematical information $I(E_i)$ to be a function that satisfies

1. inverse relation to probability: $I(E_i) \sim 1/p_i$,
2. monotonically increasing, continuous function of $1/p_i$ (equivalently, decreasing in $p_i$),
3. additivity: $I(E_1 + E_2) = I(E_1) + I(E_2)$,
4. identity corresponding to information of certainty: $I(1) = 0$.

One function that satisfies all these properties is the logarithm. This led Shannon to define the self-information of an event $E_i$ as

$$I(E_i) = \log\frac{1}{p_i} = -\log p_i. \tag{11.1}$$
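Why the logarithm in particular? A short argument, assuming $E_1$ and $E_2$ are independent so that the probability of their joint occurrence is the product $p_1 p_2$, and writing $I$ as a function of probability as in $I(1) = 0$:

$$
\begin{aligned}
I(p_1 p_2) &= I(p_1) + I(p_2) && \text{(additivity)}\\
\Rightarrow \quad I(p) &= -k \log p, \quad k > 0 && \text{(continuous, monotonic solutions)}\\
-\log_2 \tfrac{1}{4} &= 2 = \left(-\log_2 \tfrac{1}{2}\right) + \left(-\log_2 \tfrac{1}{2}\right) && \text{(two fair coin tosses, in bits)}
\end{aligned}
$$

The middle line is the standard result for the functional equation $f(xy) = f(x) + f(y)$, quoted here without proof; the constant $k$ only fixes the base of the logarithm, and $I(1) = 0$ then follows automatically.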

Here, the base of the logarithm determines the units in which information is measured. If we use the natural logarithm, the unit of information is the nat. If all events are binary in nature (yes/no answers), the logarithm is taken to base 2 and the unit of information is the bit. Note that different units of information differ only by a multiplicative constant, since

$$\log_b x = \log_b a \, \log_a x.$$
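A small sketch of Eq. (11.1): the function name `self_information` and its `base` parameter are illustrative choices, not from the text. It reuses the three-box example (finding box 2 empty had probability 2/3 for you but probability 1 for the magician) and checks the change-of-base relation and additivity for independent events:

```python
import math

def self_information(p, base=2):
    """Self-information log_base(1/p) of an event with probability p (Eq. 11.1).

    base=2 measures information in bits; base=math.e measures it in nats.
    """
    if not 0 < p <= 1:
        raise ValueError("probability must lie in (0, 1]")
    return math.log(1 / p, base)

# Opening box 2 and finding it empty: probability 2/3 for you, 1 for the magician.
print(self_information(2 / 3))  # about 0.585 bits gained by you
print(self_information(1))      # 0.0: a certainty carries no information

# Changing units is a multiplicative constant: ln x = ln 2 * log2 x.
bits = self_information(1 / 64)
nats = self_information(1 / 64, base=math.e)
print(math.isclose(nats, bits * math.log(2)))  # True

# Additivity for independent events: two fair coin tosses carry 1 + 1 = 2 bits.
print(math.isclose(self_information(0.5 * 0.5), 2 * self_information(0.5)))  # True
```

Comparisons use `math.isclose` because floating-point logarithms in different bases need not agree to the last bit.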

Example 11.1.1. Let’s calculate the information in bits carried by drawing a card out of a deck of 64 cards. To rephrase the problem, suppose a magician asks you to pull out a card at random, and then tries to guess which card you drew. The logical way for the magician to remove his ignorance about the card would be to ask you questions about it. (Of course he cannot ask you which card it is!) Since we want the answer in bits, the answers to these questions must be binary: “yes” or “no.” The problem then translates to how many such binary-answer questions the magician must ask to correctly guess the card’s number. The procedure he adopts is the “binary search” algorithm: divide the range of possible answers into two at each step and ask whether the card is in one of the two halves. Your yes or no allows him to select one of the halves and divide it further. Consider the following sample scenario:

Q 1. Is it between 1 and 32? Ans: No.

Q 2. … between 33 and 48? Ans: Yes.

Q 3. … between 33 and 40? Ans: No.

⋮

Q n.

What is the total number $n$ of such questions? It’s the number of times the range [1, 64] can be halved before a single card remains, which, if you follow through the above sequence of questions, is 6:

$$n = 6 = \log_2 64 = -\log_2 \frac{1}{64},$$

and 1/64 is the probability of choosing one particular card out of the deck. Clearly this answer is independent of which card it is (the semantic meaning of the event).
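The question count can be checked by simulating the magician’s bisection. This sketch assumes the cards are numbered 1 to 64 and that every question asks about the lower half of the remaining range; `count_questions` is a hypothetical helper:

```python
def count_questions(card, low=1, high=64):
    """Count the yes/no questions a bisecting guesser needs to pin down `card`.

    Each question is "Is it between low and mid?", i.e. about the lower half
    of the current range; the answer halves the set of remaining candidates.
    """
    questions = 0
    while low < high:
        mid = (low + high) // 2  # lower half is [low, mid]
        questions += 1
        if card <= mid:          # answer "yes"
            high = mid
        else:                    # answer "no"
            low = mid + 1
    return questions

counts = {count_questions(card) for card in range(1, 65)}
print(counts)  # {6}: every card needs exactly log2(64) = 6 questions
```

Starting from [1, 64], the ranges asked about are [1, 32], then [33, 48], then [33, 40] for a card in that region, matching the sample scenario.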

A message is the occurrence of a string of events: the appearance of each symbol constituting the message. Thus the average information carried per symbol of a message is the probability-weighted average of the self-information of the symbols. This is given by

$$H(m) = \sum_i p_i \, I(E_i) = -\sum_i p_i \log p_i. \tag{11.2}$$

What if a particular symbol doesn’t occur in the message? In that case $p = 0$. Even though $\log p$ is then undefined, the symbol cannot contribute to the information carried by the message, and for our purposes we define $0 \log 0 \equiv 0$. This convention agrees with the limit $p \log p \to 0$ as $p \to 0^+$.
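Eq. (11.2), together with the $0 \log 0 \equiv 0$ convention, translates directly into code. In this sketch `entropy` and `message_entropy` are illustrative names; `message_entropy` assumes the $p_i$ are estimated from the symbol frequencies within a single message, which is one common choice but not the only one:

```python
import math
from collections import Counter

def entropy(probs, base=2):
    """Shannon entropy H = -sum_i p_i log p_i (Eq. 11.2).

    Terms with p_i = 0 are skipped, which implements the convention 0 log 0 = 0.
    """
    return sum(p * math.log(1 / p, base) for p in probs if p > 0)

def message_entropy(message, base=2):
    """Average information per symbol, estimating p_i by symbol frequencies."""
    counts = Counter(message)
    n = len(message)
    return entropy((c / n for c in counts.values()), base)

print(entropy([1/3, 1/3, 1/3]))  # your view of the three boxes: log2(3), about 1.585 bits
print(entropy([1, 0, 0]))        # the magician's view: 0.0 bits
print(entropy([0.5, 0.5, 0]))    # an unused symbol adds nothing: 1.0 bit
print(message_entropy("abab"))   # two equally frequent symbols: 1.0 bit
```

The two box distributions from the magician example give the largest possible entropy for three outcomes (complete ignorance) and zero entropy (complete knowledge), respectively.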

From its similarity to the thermodynamic quantity of the same name, the information function $H(m)$ has been called the entropy of the message. In statistical thermodynamics, we seek to relate the macroscopic properties of a system to the microstates of its constituents. Notably, the energy of a gas is related to the momenta of its constituent molecules. If the number of microstates
