We know that ethics is the discipline that deals with the moral obligations and duties of humans.

Correspondingly, ethics of AI deals with ethics specific to robots and other artificially intelligent beings. These ethics can be divided into two groups.

- Roboethics: Roboethics considers the moral behaviour of humans as they design, construct, use and treat artificially intelligent beings.

- Machine ethics: Machine ethics governs the moral behaviour of artificial moral agents (AMAs) that play an important role in delivering artificial general intelligence (AGI) within existing legal and social frameworks.

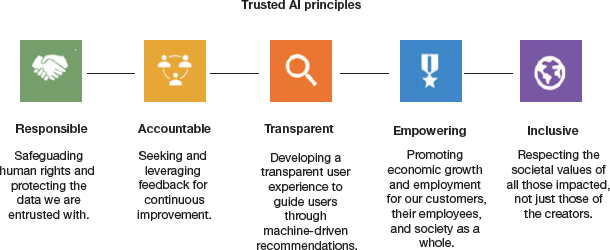

FIGURE 8.1 Trusted AI principles

The bigger concerns are as follows:

- If AI generates human-like output, can it also make human-like decisions?

- If AI makes human-like decisions, then are these decisions human-like?

- If AI takes decisions of sanctioning or rejecting a bank loan, is that decision justified?

- If AI decides whether a student should be enrolled in a college or not, is AI taking a fair decision?

- If AI makes a human-like decision, is that decision as trustworthy as a human's?

AI is basically data + mathematical model + training based on data + predictions. What if the data provided for the training is unintentionally wrong/biased?

Issues like these are endless. Hence, a good understanding of them is critical to the success of this technology. Refer to Fig. 8.1 to learn about some guiding principles of a trusted AI tool. As citizens of the AI society, we must be aware of how AI works and of the framework of AI ethics.

8.1.1 Ethical Use of Artificial Intelligence

AI systems no doubt offer a wide range of functionality for businesses, but their use also raises ethical questions.

An ideal AI system must not be biased. This is especially true when AI uses inherently unexplainable deep learning and generative adversarial network (GAN) algorithms.

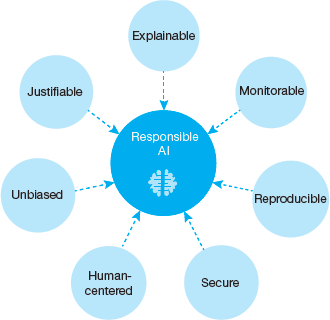

FIGURE 8.2 Features of Ethical AI System

The inability to explain decisions is a potential stumbling block to using AI systems in industries that operate under strict regulatory compliance requirements. For example, financial institutions must explain their credit-issuing decisions. The main problem with such systems is that it is difficult to explain how a decision to refuse credit was reached, as such decisions are made by teasing out subtle correlations between thousands of variables. An AI program that is not able to explain its decisions is known as black box AI.

A responsible AI system is explainable, monitorable, reproducible, secure, human-centered, unbiased and justifiable (refer Fig. 8.2).

Formulating laws to regulate AI is not at all easy, as AI uses multiple technologies. Any strict law can hamper its progress and development. AI technology is changing so rapidly that new breakthroughs sometimes make existing laws obsolete. For example, existing laws regulating the privacy of conversations and recorded conversations do not cover the challenge posed by voice assistants like Amazon’s Alexa and Apple’s Siri. These digital assistants collect data not to distribute it but to improve their machine learning algorithms. Even so, the collected data is a golden opportunity for criminals with malicious intent.

8.1.2 Is AI Dangerous? Will Robots Take Over the World?

Rapid growth and increase in the capabilities of AI systems have made us wonder about the risks involved in using AI. Some of us think that AI will quickly take over tasks performed by humans. But most researchers believe that super-intelligent AI is unlikely to exhibit human emotions, so there is no point thinking that AI will become malevolent. AI can become a risk only in two conditions.

The AI system is specifically programmed to do something devastating. For example, autonomous weapons are AI systems that are programmed to kill. If acquired by unscrupulous people with ill intentions, such weapons could inadvertently lead to an AI war and mass casualties, potentially even the end of humanity.

Autonomous weapons are designed to be extremely difficult to ‘turn off’, and once they become operational, humans could themselves rapidly lose control over them. This risk is also present with narrow AI when given autonomy.

Just imagine what would happen if an autonomous drone with facial recognition as well as a 3D-printed rifle, pistol or other gun became available. Or if a self-driving car connected to the Internet were hacked and made to cause a serious accident.

Even in hospitals, more and more equipment is now connected to the Internet. If any or all of it is hacked, can you imagine what the hacker could do with the patient’s body?

The AI system is programmed to do something beneficial, but the method it uses to achieve its goal is highly destructive. This is especially true when the AI programmer asks the machine to complete a task but does not clearly outline the goals. For example, you can instruct a self-driving car to take you to a particular destination as soon as possible. However, the instruction ‘as soon as possible’ fails to address safety, road rules, etc. The smart car may successfully complete its task, but only after creating great havoc. So, there must be some provision to continuously monitor and control the machine, its goals and the process being performed to achieve those goals.
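The ‘as soon as possible’ problem can be illustrated with a minimal sketch. Everything in it is an assumption made for illustration: the three routes, their travel times and violation counts, and the penalty weight are invented and do not come from any real navigation system. The sketch only shows how a goal that mentions time alone picks a different route from a goal that also states safety.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Route:
    name: str
    minutes: float          # estimated travel time
    rule_violations: int    # hypothetical count of traffic rules broken


# Hypothetical candidate routes, invented for this example.
ROUTES = [
    Route("run red lights", minutes=12, rule_violations=5),
    Route("speed on highway", minutes=15, rule_violations=2),
    Route("legal route", minutes=20, rule_violations=0),
]


def fastest(routes):
    """Underspecified goal: minimise travel time and nothing else."""
    return min(routes, key=lambda r: r.minutes)


def fastest_safe(routes, penalty_minutes=10.0):
    """Goal that also states safety: each violation costs extra 'minutes'."""
    return min(routes, key=lambda r: r.minutes + penalty_minutes * r.rule_violations)


print(fastest(ROUTES).name)       # run red lights
print(fastest_safe(ROUTES).name)  # legal route
```

The machine is equally ‘successful’ in both cases; only the second goal says what the passenger actually wanted. The penalty weight is itself a design decision that a human has to choose and monitor.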

Of 9 million low-skilled services and BPO roles, 30 per cent, or around 3 million, will be lost by 2022, principally driven by the impact of robotic process automation (RPA).

As of now, machines bear no liability for their actions. There is no clarity on what legal aspects bind machines as they become increasingly smart. The following questions may arise:

- Do we judge AI systems the same way as we judge a human?

- Who is responsible if the AI system becomes self-learning and autonomous to a greater extent?

- Is there any error margin for AI machines, even if an error has fatal consequences?

Apart from these two serious concerns, AI also poses some additional threats/risks that call for attention. These threats are discussed below.

Credit: PaO_STUDIO / Shutterstock

FIGURE 8.3 Effect of AI on jobs

- The immediate risk posed by job automation: As AI robots become smarter and more dexterous, they will quickly replace humans. For example, you must have heard of restaurants having robots as waiters. Just imagine where less educated people will go if machines start taking their jobs (as seen in Fig. 8.3). It is often rightly feared that the deployment of AI systems for job automation will eventually replace certain types of jobs, especially those that are predictable and repetitive. According to a 2019 Brookings Institution study, 36 million people work in jobs that they may soon lose owing to automation, as at least 70 per cent of their tasks (ranging from retail sales and market analysis to hospitality and warehouse labour) will be done using AI. In fact, a newer Brookings report even states that white-collar jobs may be the worst affected. As per a McKinsey & Company report (2018), the African American workforce will be hardest hit by automation.

- Biased algorithms: We know that computers work on the GIGO (Garbage In, Garbage Out) principle. This is a very serious limitation in AI applications. If we feed our algorithms data sets that contain biased data, then such systems will only produce biased results. A biased result, if applied to solve a real-world problem, may lead to an even bigger problem. A dynamic advertising billboard in Utrecht was switched off because the spy software installed on such billboards aroused public outrage.

- Too little privacy: When using IoT or AI systems, a lot of data is generated. It is said that approximately 2.5 quintillion bytes (or 2.5 million terabytes) of data is added each day. It is interesting to note that 90% of the digital data has been created in the last two years. A lot of data is required for the proper functioning of smart systems. As a result, much data about us is collected, thereby eroding our privacy. Once the systems collect our data, we have no means of knowing what data about us is used, by whom and for what purpose. These days, cameras can easily be fitted with facial recognition software to capture details about us (including our gender, age, ethnicity, gesture and state of mind). Do you know that in China, some police officers wear glasses with facial recognition technology having a database with facial pictures of thousands of ‘suspects’ (judged on the basis of certain behaviour)?

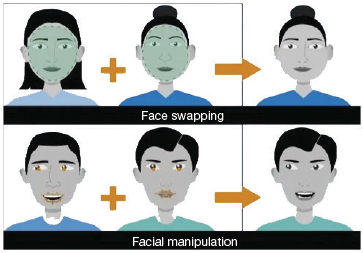

FIGURE 8.4 AI Tools can be used for Face Swapping and Face Manipulation

- Everything becomes unreliable: These days, fake news and filter bubbles are not uncommon. Smart systems can create faces, compose texts, produce tweets, manipulate images, clone voices and engage in smart advertising.

An AI system can be used to turn day into night in an image or create highly realistic faces of people who have never existed.

For example, open-source deepfake software can easily stick pictures of faces on moving video footage. Celebrities are the worst affected by such fake videos, as people with malicious intentions easily create pornographic videos starring them. Even ordinary citizens are blackmailed with morphed images and/or videos. This is often known as Faceswap video blackmailing (refer to Fig. 8.4).

Even in politics, fake or manipulated videos are produced at a high speed to influence the opinions of the masses.

Did you know that the company Cambridge Analytica was found to have accessed data from 87 million Facebook profiles of Americans to campaign for President Trump so that he could come to power?

8.1.3 Ethics in AI

The current world is quickly being transformed by artificial intelligence, and those who use this technology are living a richer and more easy-going life. However, this is possible only when we talk about ethical AI, not just AI.

The term ethical AI is composed of two words: ethical and AI. We are already familiar with the term AI. Ethical means in accordance with ethics, and ethics relates to the moral principles that govern the behaviour and actions of a group or an individual. So, ethical AI is the theory behind developing computer systems that perform tasks requiring human intelligence while following these moral principles. It focusses on how right, how fair and how just the AI’s output, outcome and impact are.

Example: We know that AI can produce biased results if it is trained with biased data or if it does not do anything to avoid bias. So, any AI system that works on biased data is actually not ethical (or unethical). Microsoft, for instance, released a chatbot called Tay on Twitter in 2016 to learn by engaging people in dialogue through tweets or direct messages. However, within hours, trolls made Tay learn negative words that spread hatred for women and for a particular race, along with all sorts of biased data. Tay was also taught to give repulsive and toxic responses. This aroused the anger of Twitter users, and Microsoft finally had to silence Tay forever in less than 24 hours.

An ethical AI system designed to solve a particular problem uses unbiased data, is trained using the right learning model for the problem, and is monitored to evaluate whether the results it produces are right and fair.

8.1.4 AI and Bias

Think about the following statements.

- Most images that show up when you do an image search for ‘doctor’ are white men.

- Images and videos usually show a ‘doctor’ as a male character and a ‘nurse’ as a woman.

- All virtual assistants like Alexa, Siri, Google Assistant, etc. work with a female voice.

- Computer vision systems frequently give an error while recognizing people of different colour.

Can you think of more such cases where we witness bias towards a particular gender, colour, caste, religion, country, etc.? Technically, AI bias occurs when an algorithm produces results that are systematically prejudiced towards a particular group of people and thus produces skewed output. AI applications may have built-in biases because, ultimately, they are created by humans who have conscious or unconscious preferences that may go undiscovered until the algorithms are used at a massive scale. Let us understand three basic sources of bias in AI systems.

Data

Any AI system is only as good as the data we put into it. Naturally, adding biased or skewed data can never give fair results. Machines do not have the intelligence to differentiate between right and wrong training data.

For example, you must have noticed many a time that Google or any other voice assistant replies in an American accent and at times gives wrong results when we give a command in an Indian accent. This happens because the AI system is trained on recorded speech from white, upper-middle class Americans, making it difficult for the technology to understand commands from people not belonging to this category.

Algorithm

Algorithms can further amplify the biases injected by skewed data. For example, if you do a Google Image Search for ‘Teacher’, then the image classifier algorithm trained on the images available in the public domain shows more women teaching than men. AI algorithms are designed to maximize accuracy. But in this case, the AI algorithm may decide that all people teaching are women, despite the fact that the training data has some images of men in the classroom. So, this is a case of gender bias that becomes a part of the AI algorithm due to bias in the data.
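A minimal sketch can show how maximizing accuracy turns a skew in the data into a total bias in the output. The 70/30 split below is an invented figure, and the ‘classifier’ is a deliberately simple majority-label rule, not the algorithm used by any real image search engine.

```python
from collections import Counter

# Hypothetical training labels: who appears in images tagged "teacher".
training_labels = ["woman"] * 70 + ["man"] * 30


def train_majority_classifier(labels):
    """Return a classifier that always predicts the most common training label.

    With no other informative features, this is the prediction rule that
    maximises accuracy on the training data.
    """
    most_common_label, _ = Counter(labels).most_common(1)[0]
    return lambda image: most_common_label


def share(labels, value):
    """Fraction of entries in labels equal to value."""
    return labels.count(value) / len(labels)


classifier = train_majority_classifier(training_labels)
predictions = [classifier(image) for image in range(100)]

print(f"women in training data: {share(training_labels, 'woman'):.0%}")  # 70%
print(f"women in predictions:   {share(predictions, 'woman'):.0%}")      # 100%
```

The training data was skewed 70 to 30, but the accuracy-maximizing rule predicts ‘woman’ every time, so the 30 per cent of men disappear entirely from the output.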

People

People who are developing the AI system, that is, engineers, scientists, developers, etc., are the next big source of bias. Humans design AI systems to give the most accurate results with the available data. So, the people who feed the data, being human, may again unknowingly undermine the system's success by passing on their own biases. Therefore, it is rightly said that ethics and bias are not the problem of the machine but that of the humans behind the machine.

8.1.5 Towards Ethical and Trustworthy AI

Today, several companies worldwide are using algorithm-based ‘emotional AI’ hiring platforms to augment their recruitment processes and lower the financial burden of hiring. While these systems may hire in a fair and unbiased manner, there are reported cases of women applicants being disproportionately rejected because the systems were trained on years of biased data from a male-dominated sector.

Let us now see a few ways in which the world is trying to deal with this situation.

Regulating a More Ethical AI

In April 2021, the European Commission proposed its first ever legal framework on AI to guarantee the safety and fundamental rights of people and businesses, while strengthening AI uptake, investment and innovation across the EU.

The new risk-based approach will not only set strict requirements for AI systems but also immediately ban AI systems which are considered to ‘be a threat to the safety, livelihood and rights of people’ including ‘systems that manipulate human behaviour, circumvent users’ free will and allow social scoring by governments’.

Later, in June 2021, Australia launched a similar AI ethics framework to guide businesses, governments and other organizations to responsibly design, develop and use AI.

Company and Organizational Engagement

Besides necessary regulation and standards, these days, organizations are building trust in AI technologies through cultural and educational programmes, risk assessments and third-party audit programmes. This would help them to move towards a prevention, detection and response framework (like anti-corruption, prevention of tax evasion frameworks).

Rights and Activist Groups

AI systems are yet to go a long way. In this journey, the relationship between people and technology will be redefined continuously. To ensure that our societies move forward on the path of ethics, it is critical that AI systems are human-centric. Civil rights and activist groups need to challenge the discourse and amplify the voices of those who are most adversely affected by new technologies.

We must all ask the question: is the technology necessary, or is there an alternative? Only if the benefits outweigh the harm should we go on to check for whom the AI systems work.

Ensuring Data Privacy

Companies are now taking measures to keep data safe and protect it from potential misuse. Privacy protection is becoming an integral part of any ethical AI system. Data ethics and privacy in such systems are ensured by the following:

- Encrypting the data.

- Ensuring that the AI algorithm cannot learn from outside its dataset.

- Using secure computation so that even the people who developed or work on those AI systems are not able to see or access the data.

- Restricting reverse engineering of the AI model so that no user can access the data used for model training.

Diversify Your Team

Since bias is an important issue and results from an AI tool will be used by people of different racial, gender and economic identities, we need to make sure that there is no bias. For this, build diverse teams to reduce the risk of bias falling through the cracks. A diverse team has data scientists, business leaders and professionals with different educational backgrounds and experiences, such as lawyers, accountants, sociologists and ethicists. This helps the company to get their views on any sort of bias so that it can be mitigated in a timely manner. The team should continually analyse data and algorithms to ensure fairness. There are many tools like Bias Analyzer which automate this process and also show the costs and benefits associated with a variety of possible mitigation actions.
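As a rough idea of what such an analysis might involve, the sketch below compares how often each group receives a positive decision. It is not how Bias Analyzer or any other named tool works; the audit records, the group names and the 0.8 threshold are all assumptions made for illustration.

```python
from collections import defaultdict

# Hypothetical audit log of (group, was_selected) pairs, invented for this example.
decisions = (
    [("men", True)] * 40 + [("men", False)] * 60
    + [("women", True)] * 20 + [("women", False)] * 80
)


def selection_rates(records):
    """Return the fraction of positive decisions for each group."""
    totals = defaultdict(int)
    selected = defaultdict(int)
    for group, was_selected in records:
        totals[group] += 1
        selected[group] += int(was_selected)
    return {group: selected[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates):
    """Lowest selection rate divided by the highest (1.0 means parity)."""
    return min(rates.values()) / max(rates.values())


rates = selection_rates(decisions)
ratio = disparate_impact_ratio(rates)
print(rates)
print(f"ratio = {ratio:.2f}")
if ratio < 0.8:  # assumed threshold; choose one appropriate to your context
    print("Possible bias: review the data and the model.")
```

Here women are selected half as often as men, so the ratio is 0.50 and the check raises a flag. A single number like this is only a starting point for the team's discussion, not proof that a system is fair or unfair.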

8.1.6 Why is Ethical AI Important?

Since AI is used in areas like medicine, law enforcement, recruiting, data privacy, military defense and self-driving vehicles, AI systems must produce accurate, transparent and understandable results that are in line with the ethical standards and norms of our society. To understand the need for ethical AI systems, we must first understand that a biased or incorrect output from an AI system that assists in law enforcement, job recruitment, defense work or self-driving vehicles can erode people’s privacy (by misusing data in unintended ways) and lead to decisions that are impossible for people to understand, making it hard to assess liability for damages when harm is caused.

Apart from being unbiased and accurate (as shown in Fig. 8.5), the credibility of AI systems also depends on how we choose to develop and use them. We can only hope that AI will be used for purposes that benefit mankind. But there is nothing that can stop a person from creating systems that can be disastrous for humanity.

In October 2019, researchers found that an algorithm used in US hospitals to predict which patients may require extra medical care heavily favoured white patients over black patients. This bias crept in because white patients paid more bills and availed extra medical facilities for the same medical problem as compared to black patients.

Amazon’s hiring algorithm also suffered from bias-related issues. The algorithm was found to be biased against women. This was probably because the system saw a greater number of male applicants than female applicants.

FIGURE 8.5 An ethical system must be unbiased and accurate

Nonetheless, efforts are being made by numerous agencies, committees, coalitions and expert groups to ensure that we are on the right track to make AI systems do more to heal than harm. For example, the IEEE Global Initiative on Ethics of Autonomous and Intelligent Systems put out Ethically Aligned Design, a 2016 publication that outlines the guiding standards for developing and administering ethical AI solutions. In short, ethical AI aims to ensure that AI is doing good for the planet.

Going further on this, the European Commission High-Level Expert Group on AI identified seven guiding principles for creating Trustworthy AI. These guidelines are as follows:

- AI systems should support human autonomy and decision-making.

- AI systems should be technically robust and safe to use. They must have a fallback plan that can be used when something goes wrong.

- AI systems should ensure data privacy by protecting users’ data and providing adequate mechanisms to maintain the quality and integrity of the data.

- AI systems should not work on biased data. The system should be clear, explicable and transparent about its underlying data and the models.

- AI systems should be trained with diverse, non-discriminatory and fair data and models to avoid unfair bias.

- AI systems should be designed to benefit everyone, now and in future. They must focus on societal and environmental wellbeing to be sustainable and environmentally friendly.

- An AI system should have the accountability to ensure accurate, unbiased outcomes with utmost responsibility.

- An AI system must give due importance to data privacy and data security. An organization that designs confidentiality, transparency and security into its AI programs must ensure that data is collected, used, managed and stored safely and responsibly.

- An AI system must be accountable for its decisions. There must be someone in the organization who will be held responsible for any problematic situation.

Have you ever wondered how much information Google has about you? On an Android phone, Google has your entire contact list. Google knows the names and numbers of the people you talk to. Google knows your location. It knows where you go every day and on holidays. Google knows what email you write to whom. Google has your photographs (Google Photos) and knows details about your friends (Google Hangouts). All this is done in return for the free services it provides. But imagine what a big risk it poses to our privacy.

8.1.7 Impact of AI on Jobs

Today, AI is on the tip of everyone’s tongue and is one of the most in-demand areas of expertise for job seekers. The World Economic Forum has predicted that AI and ML will displace 75 million jobs but will also create 133 million new ones by 2022.

Some of our favourite sci-fi movies have fuelled the fear that AI will one day make human beings obsolete in the workforce. As the technology advances, many tasks that were once executed by humans are now automated.

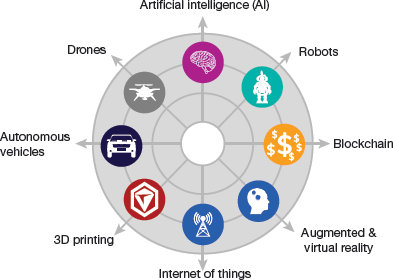

FIGURE 8.6 Emerging Areas in IT

A two-year study from McKinsey Global Institute suggests that by 2030, intelligent agents and robots could replace as much as 30% of the world’s current human labour. That is, automation will displace between 400 and 800 million jobs and require approximately 375 million people to switch job categories entirely.

However, the fear of job losses due to AI is far from reality. AI will just introduce a paradigm shift, similar to the one which occurred after the Industrial Revolution. When the Industrial Revolution took place, many feared that people would lose jobs to machines. But now we see that the revolution generated a lot more opportunities and jobs. Similarly, with AI, many professions will become obsolete and disappear, but new emerging occupations in the areas listed in Fig. 8.6 will become popular. Learning AI skills will help you gain a significant career advantage.

Jobs which will grow with the help of AI include the following.

Creative jobs will get refined and advanced by use of AI. These jobs are usually performed by professionals like artists, doctors and scientists. AI will not only enhance efficiency but also make these jobs less complex for humans.

AI will probably not make human workers obsolete, at least not for a long time.

Management jobs cannot be taken over by artificial managers because, at any time, human managers will have to control artificial managers. Managing is a very complex task that involves a deep understanding of emotions, profession, ethics, people and communication. Human managers can use certain tools to become more effective at their jobs.

Tech jobs including programmers, data scientists, data analysts, big data engineers and all those who work on the creation and maintenance of AI systems will always be in high demand. However, a few tech jobs which are in demand today may become less common, while others may become more vital.

A few job titles which can be expected to appear by 2030 include Chief Bias Officer, Data detective, Man–Machine teaming manager, AI business development manager, AI assisted medical professional, AI tutor, to name a few.

In many sectors of business, the use of AI has grown by 270% over the last four years. Rather than promoting the obsolescence of humans, AI will continue to drive massive innovation that will fuel many existing industries and could create many new sectors for growth, ultimately leading to the creation of more jobs.

We must understand that although AI has done a lot to replicate the efficacy of human intelligence in executing certain tasks, AI programs suffer from major limitations. They can only solve one problem at a time. These systems are rigid and are unable to think outside their prescribed programming. In contrast, humans have generalized intelligence, which helps them solve any kind of problem, think abstractly and make critical judgements across all sectors.

Moreover, AI can learn only with massive amounts of relevant data that is available at the right time. Many a time, this data is protected because of privacy and security concerns. In addition, data may have bias. And it is quite obvious that biased data will never give accurate results.

AI is becoming the standard in all businesses, not just in the world of tech

Machines may also suffer because of limited computation and processing power. For example, the cost of electricity required to power one supercharged language model AI was estimated at around $4.6 million.

So, AI has the potential to ultimately create more jobs, not fewer. The question is not ‘humans or computers’ but ‘humans and computers’ involved in complex systems that advance industry and prosperity. Nobody would want to be behind the curve when it comes to AI. In fact, 90% of leading businesses have already invested in AI technologies. More than half of the businesses that have implemented some manner of AI-driven technology report experiencing greater productivity.

AI and machine learning top the skills required by companies these days and jobs in this area are expected to increase by 71% in the next five years. AI is likely to have a strong impact on certain sectors in particular. These are discussed below.

- Medical: The medical industry has huge amounts of data that can be used to create predictive healthcare models and to help physicians with diagnosis. At a higher level, AI and automation could also help to eliminate disease and world poverty.

- Automotive: Autonomous vehicles, autonomous navigation and manufacturing within the automotive sector have all been possible with AI technology.

- Cybersecurity: During the pandemic, cyber frauds rose by 600% as hackers capitalized on people working from home, on less secure technological systems and Wi-Fi networks. AI and machine learning provide powerful tools to identify and predict threats. AI is also playing a vital role in the field of finance as it can process large amounts of data to predict and catch instances of fraud.

- E-commerce: On e-commerce websites (like Amazon), AI is used to design chatbots, recommend products, use image-based targeted advertising, and assist in warehouse and inventory automation.

- HR applications: An HR department gets thousands of applications every day. AI tools can be used to reject 75% of resumes that do not fit the current context. Prior to AI, HR managers had to devote considerable time to filter applications to select suitable candidates. Data from LinkedIn shows that recruiters spend up to 23 hours looking over resumes for one successful hire.

- Legal profession: Support services for document handling (classification, discovery, summarization, comparison, knowledge extraction and management) use AI for efficiency and accuracy.

Thus, the AI revolution will lead to a new era of prosperity, creativeness and well-being. Humans will no longer be asked to perform routine, limited-value, full-time jobs. Rather, jobs will be flexible and offer selective premium services. There will be more jobs related to programming, robotics, engineering, etc. And, blue-collar and white-collar jobs will be eliminated.
