A neural network (NN), or artificial neural network (ANN), is a machine learning algorithm that is inspired by the biological nervous system and learns from examples. It consists of a large number of highly interconnected processing elements, called neurons, that work together to solve problems. The algorithm models non-linear relationships, and information is processed in parallel across the nodes. A key strength of a neural network is that it can change its internal behaviour by adjusting the weights of its inputs.

Neural network algorithms were developed to solve problems that are easy for humans but difficult for machines. These problems include pattern recognition, in which we need to identify similar pictures or patterns. Because of this ability, the applications of neural networks range from optical character recognition to object detection.

2.7.1 Working of Neural Networks

In the human brain, the dendrites of a neuron receive input signals from other neurons, and the neuron sends an output based on those inputs along its axon to other neurons. The human brain comprises an interconnected web of billions of neurons that process information, which enables the brain to think and take decisions.

Similarly, the neural network algorithm works using a set of connected input/output units. In this structure, each connection has a weight associated with it. In the learning phase, the network learns by adjusting the weights to predict the correct class label of the given inputs.

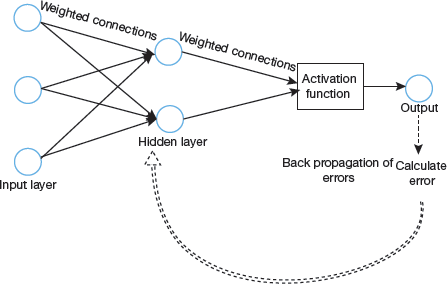

The learning phase uses the error back-propagation method. When the error is calculated at the output unit, it is propagated back to all the units, because each unit contributes to the total error at the output unit. The error attributed to each unit is then used to optimize the weight of each connection.

The weighted sum computed by a neuron may vary from −inf to +inf. So, we need a mapping function, known as the activation function, that maps the inputs of the neuron to its output.

A Simple Neural Network Model

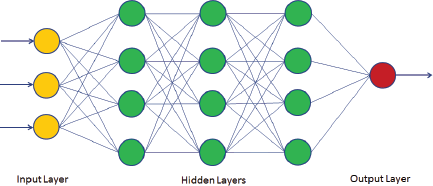

Figure 2.29 shows a feedback neural network, in which information is passed in both directions (forward as well as backward). The structure of the neural network can change over time based on the inputs. Although the figure shows one input layer, one hidden layer and one output layer, a network can have multiple hidden layers, as shown in Fig. 2.30.

FIGURE 2.29 Feedback neural network

FIGURE 2.30 Neural Network with multiple hidden layers

Here, the input layer is the first layer of the neural network and receives the raw input. It processes the input and passes the processed information to the hidden layers. The hidden layer passes the information to the last layer, which gives the final output.

The output of a neuron can range from −inf to +inf. The neuron doesn’t know the boundary. So, we need a mapping mechanism between the input and output of the neuron. This mechanism of mapping inputs to the output is known as the activation function.

2.7.2 Pros and Cons

Pros

- A neural network is a flexible algorithm that can be used to solve both regression and classification problems.

- Neural networks perform well on non-linear datasets with a large number of inputs, such as images.

- Neural networks can work with any number of inputs and layers.

- Once trained, a neural network can make predictions quickly because its calculations can be performed in parallel.

Cons

- Simpler algorithms like Decision Tree and Regression, which are fast, easy to train and often perform well, are frequently preferred for classification and regression problems because neural networks require more time for development and need more computation power.

- Neural networks typically need more data than other machine learning algorithms.

- Neural networks can work only with numerical inputs and with datasets that have no missing values.

2.7.3 Applications of Neural Networks

Neural network algorithms are extensively used in the following fields.

- Pattern recognition applications like facial recognition, object detection, fingerprint recognition, etc. use neural network algorithms.

- Anomaly detection applications, which are designed to detect unusual patterns that don’t fit the general pattern, use neural networks because of their ability to perform well in pattern recognition tasks.

- Time series prediction applications, like predicting stock prices and forecasting weather, often use neural networks.

- Natural language processing applications, including text classification, Named Entity Recognition (NER), Part-of-Speech Tagging, Speech Recognition, and Spell Checking, make use of neural network algorithms.

2.7.4 How Do Neural Networks Work?

We have seen that a neural network takes several inputs, processes them through multiple neurons in multiple hidden layers, and returns the final result through an output layer. This process is known as ‘forward propagation’.

To improve the model, we need to adjust the weights of the neurons that contribute most to the error. For this, we travel back through the neurons of the network to find where the error lies. This process is known as ‘backward propagation’. The ‘gradient descent’ algorithm then uses this information to update the weights so that the error is minimized in fewer iterations.

A simple strategy of creating input-output relationships is discussed below.

- Use a subset of inputs to calculate the output. Take only those inputs that satisfy a given threshold value. For example, if the threshold value is 0, then if x1 + x2 + x3 > 0, the output is 1 and 0 otherwise.

- Add weights to the inputs. Weights give importance to an input. For example, if w1 = 4, w2 = 5, and w3 = 6, then on assigning these weights to x1, x2 and x3, we multiply the inputs by their weights and compare the result with the threshold value. That is, w1 × x1 + w2 × x2 + w3 × x3 > threshold. As per the details provided, more importance has been given to x3 in comparison to x1 and x2.

- Add bias: Each perceptron has a bias that indicates how flexible the perceptron is. Therefore, the linear representation of the input will now be w1 × x1 + w2 × x2 + w3 × x3 + 1 × bias.

A neuron applies a non-linear transformation (the activation function) to the weighted sum of its inputs and the bias.
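The three steps above (threshold, weights, bias) can be sketched in a few lines of Python. This is a minimal illustration, not a definitive implementation: the weights 4, 5 and 6 come from the text, while the input values, the bias of −3 and the function names are assumptions chosen only for the example.

```python
def step(z, threshold=0.0):
    """Step activation: 1 if the value exceeds the threshold, else 0."""
    return 1 if z > threshold else 0


def perceptron_output(inputs, weights, bias=0.0, threshold=0.0):
    """Compute w1*x1 + w2*x2 + ... + 1*bias and compare it with the threshold."""
    z = sum(w * x for w, x in zip(weights, inputs)) + 1 * bias
    return step(z, threshold)


inputs = [1, -1, 0.5]     # hypothetical x1, x2, x3
weights = [4, 5, 6]       # w1, w2, w3 from the text

# Step 1: plain sum of inputs (all weights equal to 1): 1 - 1 + 0.5 = 0.5 > 0
print(perceptron_output(inputs, [1, 1, 1]))

# Step 2: weighted sum: 4*1 + 5*(-1) + 6*0.5 = 2 > 0
print(perceptron_output(inputs, weights))

# Step 3: weighted sum plus a (hypothetical) bias of -3: 2 - 3 = -1, not > 0
print(perceptron_output(inputs, weights, bias=-3))
```

With these assumed values, the bias is what flips the output from 1 to 0, which illustrates how the bias shifts the point at which the perceptron fires.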

Now, the back-propagation (BP) algorithm determines the loss (or error) at the output and then propagates it back into the network. The weights are updated to minimize the error resulting from each neuron. In this process, the first step is to determine the gradient (derivative) of the error with respect to each weight.

Note that one round of forward propagation and backpropagation is known as one training iteration. One complete pass over the entire training data is known as an epoch.
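To make the forward and backward passes concrete, here is a minimal sketch for a single sigmoid neuron trained on one example. The squared-error loss, the learning rate, the starting weights and the data point are all assumptions made for illustration; a real network repeats the same chain-rule calculation layer by layer.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def train_step(w, b, x, target, lr=0.5):
    """One forward pass and one backward pass for a single sigmoid neuron."""
    # Forward propagation: weighted sum, then activation.
    z = sum(wi * xi for wi, xi in zip(w, x)) + b
    y = sigmoid(z)
    loss = 0.5 * (y - target) ** 2

    # Backward propagation: chain rule, d(loss)/dz = d(loss)/dy * dy/dz.
    delta = (y - target) * y * (1.0 - y)

    # Move each weight against its gradient (d(loss)/dw_i = delta * x_i).
    new_w = [wi - lr * delta * xi for wi, xi in zip(w, x)]
    new_b = b - lr * delta
    return new_w, new_b, loss


w, b = [0.1, -0.2], 0.0        # hypothetical starting weights and bias
x, target = [1.0, 2.0], 1.0    # hypothetical training example

losses = []
for _ in range(50):            # 50 training iterations on the same example
    w, b, loss = train_step(w, b, x, target)
    losses.append(loss)

print("first loss:", losses[0])
print("last loss: ", losses[-1])
```

The loss recorded in the last iteration is smaller than in the first, which is the effect the weight updates are meant to produce.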

2.7.5 What is an Activation Function?

Activation function takes the sum of weighted input (w1 × x1 + w2 × x2 + w3 × x3 + 1 × b) as an argument and returns the output of the neuron.

The activation function is usually used to make a non-linear transformation that allows us to fit non-linear hypotheses or to estimate complex functions. Some commonly used activation functions include ‘Sigmoid’, ‘Tanh’ and ‘ReLU’.
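The three activation functions named above can be written directly with the Python standard library. This is a small sketch using their standard definitions; the sample weighted sum is a hypothetical value.

```python
import math


def sigmoid(z):
    """Squashes any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def tanh(z):
    """Squashes any real number into the range (-1, 1)."""
    return math.tanh(z)


def relu(z):
    """Passes positive values through unchanged and maps negatives to 0."""
    return max(0.0, z)


# Hypothetical weighted sum: w1*x1 + w2*x2 + w3*x3 + 1*b
z = 4 * 1.0 + 5 * (-1.0) + 6 * 0.5 + 1 * (-3.0)   # = -1.0

for name, fn in [("sigmoid", sigmoid), ("tanh", tanh), ("relu", relu)]:
    print(name, fn(z))
```

Sigmoid and Tanh bound the output of the neuron, whereas ReLU only removes the negative part, which is one reason the choice of activation function affects how a network learns.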

2.7.6 Gradient Descent

There are two variants of the gradient descent algorithm: Full Batch Gradient Descent and Stochastic Gradient Descent (SGD). Both variants update the weights of the neurons. The underlying technique is the same; the only difference lies in the number of training samples used for each update of the weights and biases.

While the Full Batch Gradient Descent algorithm uses all the training data points for each update of the weights, Stochastic Gradient Descent uses one or more data points, but never the entire training data, for each update.

For example, suppose we have a dataset of 10 data points and two weights, w1 and w2.

In a Full Batch algorithm, all 10 data points are used to calculate the change in w1 (Δw1) and the change in w2 (Δw2), and w1 and w2 are updated accordingly.

With the SGD algorithm, however, the first data point alone is used to calculate Δw1 and Δw2 and to update w1 and w2. Then, the second data point is used to update the weights, and so on for the remaining data points.
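The difference between the two variants can be sketched on a toy problem with 10 data points and two weights, as in the example above. Everything specific here is an assumption for illustration: the data are generated from y = 2 × x1 − 1 × x2 without noise, the model is a plain weighted sum, and the learning rate and number of passes are arbitrary.

```python
# Hypothetical noise-free data: 10 points generated from y = 2*x1 - 1*x2.
TRUE_W1, TRUE_W2 = 2.0, -1.0
points = []
for i in range(10):
    x1, x2 = i / 10, ((3 * i) % 10) / 10
    points.append(((x1, x2), TRUE_W1 * x1 + TRUE_W2 * x2))


def gradients(w1, w2, batch):
    """Average gradient of the squared error over the given data points."""
    g1 = g2 = 0.0
    for (x1, x2), y in batch:
        err = (w1 * x1 + w2 * x2) - y
        g1 += err * x1
        g2 += err * x2
    return g1 / len(batch), g2 / len(batch)


def full_batch(data, passes=500, lr=0.5):
    """All 10 points contribute to every single update of w1 and w2."""
    w1 = w2 = 0.0
    for _ in range(passes):
        g1, g2 = gradients(w1, w2, data)
        w1, w2 = w1 - lr * g1, w2 - lr * g2
    return w1, w2


def sgd(data, passes=500, lr=0.5):
    """Each point triggers its own update, so one pass makes 10 updates."""
    w1 = w2 = 0.0
    for _ in range(passes):
        for point in data:
            g1, g2 = gradients(w1, w2, [point])
            w1, w2 = w1 - lr * g1, w2 - lr * g2
    return w1, w2


print("full batch:", full_batch(points))
print("SGD:       ", sgd(points))
```

On this toy data both variants approach the generating weights (2, −1). The full batch version makes one update per pass over the data, while SGD makes ten.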

An activation function is what makes a neural network capable of learning complex non-linear functions.

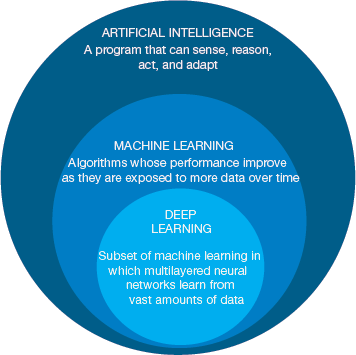

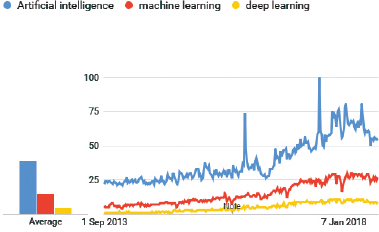

Neural Networks and Deep Learning

A majority of AI systems are supported by breakthroughs in machine learning and deep learning techniques. These techniques go hand-in-hand so closely that it is sometimes difficult to understand the difference between artificial intelligence, machine learning and deep learning. However, venture capitalist Frank Chen highlighted this difference by stating that ‘Artificial intelligence is a set of algorithms and intelligence to try to mimic human intelligence. Machine learning is one of them, and deep learning is one of those machine learning techniques.’

Deep learning is inspired by the human brain and its neurons. Therefore, to understand deep learning, we first need to recapitulate how the neurons in our brains work. Have you ever wondered:

How does a child distinguish between a car and a bike?

How do we perform complex pattern recognition tasks?

How do we differentiate between a male voice and a female voice?

The answer is that our brains have a huge, connected and complex network of about 86 billion neurons, each of which is, in turn, connected to thousands of other neurons. This network of neurons, or what we call a neural network, lays the foundation of deep learning. So, let us first get an introduction to neural networks.

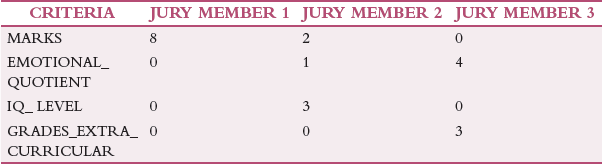

Example: A school has to select a student for the prestigious title, ‘STUDENT OF THE YEAR’. The principal forms a jury of three people for this task. The jury decides that the selection criteria will be MARKS, EMOTIONAL_QUOTIENT, IQ_LEVEL and GRADES_EXTRA_CURRICULAR.

The school has a history of fair selection and, to maintain the same standard, the principal shares with the jury the data of about 10 previously selected students. This gives the jury an opportunity to practice, which will eventually help them make a fair selection.

Every jury member is given a maximum of 10 points (weight) with which to rate a student. These 10 points can be distributed across the four criteria. The cut-off average to qualify in the selection process is ‘6’. So, a student must have an average score of ≥ 6.

SCORE OF STUDENT 1

Jury Member 1: The first jury member gives the entire prominence to marks and therefore allots 8 points to Student 1 on this criterion alone.

Jury Member 2: The second member distributes the points across all criteria except grades in extra-curricular activities.

Jury Member 3: The third jury member finds emotional quotient and grades in extra-curricular activities more important and thus allocates points based on performance in these areas.

Finally, the average score of Student 1 is calculated as (8 + 6 + 7) / 3 = 7.

Since this score is greater than 6, Student 1 qualifies in round 1. But the principal reveals that this student was actually not selected in round 1 as per the original decision.

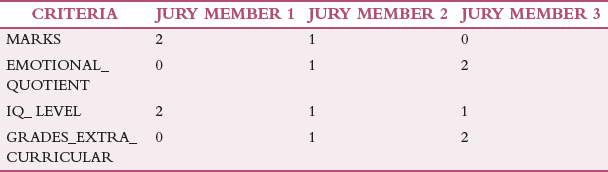

Now, the jury members were asked to adjust their scoring process to see if they can correctly predict Student 2’s selection or not.

JURY MEMBER 1: The member thinks that excess weightage was assigned to MARKS, so now also considers IQ_LEVEL when assigning points.

JURY MEMBER 2: Now distributes points across all four criteria.

JURY MEMBER 3: Now allots points to all criteria except MARKS.

The average score of Student 2 is (4 + 4 + 5) / 3 ≈ 4.3.

Since this score is less than 6, Student 2 is not selected. This time, the principal reveals that the decision of the jury was indeed correct: Student 2 was rejected in the original selection process.
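The arithmetic of the two rounds can be checked with a short sketch. The jury scores, the cut-off of 6 and the original decisions are taken from the example; the function and variable names are only illustrative.

```python
CUTOFF = 6  # minimum average score needed to be selected


def average_score(jury_points):
    """Average of the points given by the jury members."""
    return sum(jury_points) / len(jury_points)


def is_selected(jury_points, cutoff=CUTOFF):
    return average_score(jury_points) >= cutoff


# (points from jury members 1, 2 and 3, original decision of the school)
rounds = {
    "Student 1": ([8, 6, 7], False),
    "Student 2": ([4, 4, 5], False),
}

for name, (jury_points, actual) in rounds.items():
    predicted = is_selected(jury_points)
    verdict = "correct" if predicted == actual else "wrong, adjust the weightage"
    print(name, round(average_score(jury_points), 1), predicted, verdict)
```

The jury's prediction is wrong for Student 1 and correct for Student 2, which is exactly the feedback the members use to adjust how they distribute their points.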

In this way, the jury evaluates the records of the other candidates and, in the process, learns a pattern for assigning the right ‘weightage’ to each criterion. Of course, the weightage that gives the highest number of correct predictions is kept for evaluating the upcoming contest.

This entire process of learning from examples and improving accuracy is essentially how an Artificial Neural Network (ANN) works.

Deep Learning

Deep learning (also known as deep neural learning or deep neural network) is an AI technique that imitates the working of the human brain in processing data and creating patterns for use in decision making. It is a subset of machine learning that can learn from labelled data as well as, without supervision, from unstructured or unlabelled data.

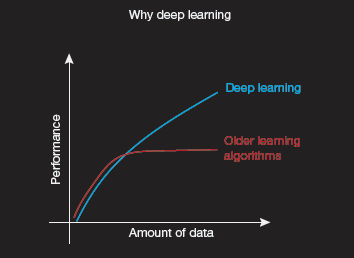

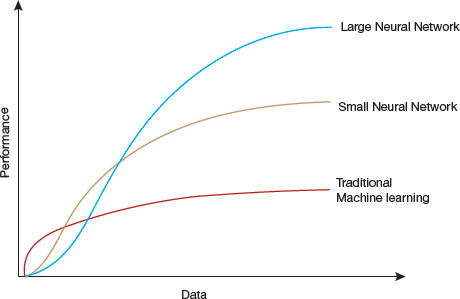

In today’s scenario, we generate a massive amount of data (approx. 2.6 quintillion bytes) every day. This huge volume of data paves the way for the application of deep learning techniques (refer Fig. 2.31). Deep learning algorithms flourish when applied to large volumes of data processed using stronger computing power.

When applied to very diverse, unstructured and inter-connected data, deep learning can be used to solve complex problems. The more deep learning algorithms learn, the better they perform.

FIGURE 2.31 How do data science techniques scale with amount of data?

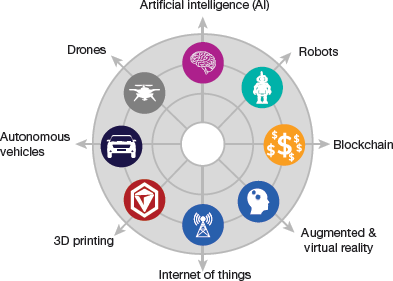

Deep learning is usually used to detect objects, recognize speech, translate languages, recognize similar images, recommend products, make important decisions, detect fraud or money laundering, and explore the possibility of reusing drugs for new ailments, among other functions. Its use grew to an unprecedented level with the massive explosion of data worldwide. The big data that we collect from social media, internet search engines, e-commerce platforms, online cinemas, etc. is unstructured. Such data, when analysed using deep learning techniques, can reveal a wealth of information and can be used with AI systems for automated support. Figure 2.32 highlights the relation between AI and DL.

Deep learning is the key technology behind driverless cars. Such cars are designed to recognize a stop sign or to distinguish a pedestrian from a lamppost. Basically, a deep learning model learns to perform classification directly from images, text, or sound. For better accuracy, such models are trained by using a large set of labelled data and neural network architectures containing several layers.

Deep learning uses a hierarchical level of artificial neural networks to carry out the process of machine learning.

How Does Deep Learning Work?

We have studied that neural networks consist of layers of nodes, designed in the same way as the human brain is made up of neurons. Nodes within individual layers are connected to adjacent layers. Just as a single neuron in the human brain receives thousands of signals from other neurons, a node in a neural network receives signals from the nodes connected to it. A signal travels between nodes, and a weight is assigned to every connection.

A connection with a higher weight gives its node more influence on the next layer of nodes. The final layer combines its weighted inputs to produce the final output.
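The way a signal travels from layer to layer can be sketched as a forward pass through a tiny network. This is an illustrative sketch only: the layer sizes, the weights, the biases and the use of a sigmoid activation at every node are assumptions, not values from the text.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def layer_forward(inputs, weights, biases):
    """Output of one layer. weights[j][i] is the weight on the connection
    from input i to node j of this layer."""
    return [
        sigmoid(sum(w * x for w, x in zip(node_weights, inputs)) + bias)
        for node_weights, bias in zip(weights, biases)
    ]


def forward(inputs, layers):
    """Pass the signal through every layer in turn."""
    activations = inputs
    for weights, biases in layers:
        activations = layer_forward(activations, weights, biases)
    return activations


# Hypothetical network: 3 inputs -> 2 hidden nodes -> 1 output node.
network = [
    ([[0.5, -0.4, 0.1], [0.3, 0.8, -0.6]], [0.0, 0.1]),  # hidden layer
    ([[1.2, -0.7]], [0.05]),                             # output layer
]

output = forward([1.0, 0.5, -1.0], network)
print(output)
```

Adding more `(weights, biases)` pairs to `network` adds more hidden layers without changing the `forward` function, which is the sense in which a network becomes "deeper".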

A neural network is said to be a deep neural network depending on the number of layers it has. A deep learning system needs more powerful hardware because it has more layers and performs several complex mathematical calculations on a large amount of data. Large data sets help produce accurate results. For example, a facial recognition program starts by detecting and recognizing the edges and lines of faces and then gradually learns to detect other significant parts of the faces. The process continues until the overall representations of faces are identified. Over time, the program trains itself with every iteration, and thus the probability of correct answers increases. Neural networks can be used to perform clustering, classification or regression. The individual layers of a neural network can be thought of as filters that increase the likelihood of giving the correct result as output.

FIGURE 2.32 DL is a subset of ML which in turn is just a sub-domain of AI

For example, when creating a neural network that identifies images containing a dog, we need to train the network with pictures showing dogs at different angles and with varying amounts of light and shadow. These images in the training data set are converted into data, which moves through different nodes in the network. The prediction, identification or classification result is finally produced by the last layer, that is, the output layer of the neural network. The output produced by the neural network is then compared with the labels provided by a human. If the two values match, the output is confirmed; otherwise, the neural network notes the error and adjusts the weights of its connections.

Some popularly used open-source deep learning libraries include Google TensorFlow, Facebook’s open-source modules for Torch, Amazon DSSTNE on GitHub, and Microsoft CNTK.
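The compare-and-adjust loop described above can be sketched with the classic perceptron learning rule, which is swapped in here as the simplest rule of this kind (real image classifiers use backpropagation over many layers). The training samples are a hypothetical stand-in for labelled images: two binary features with a label of 1 only when both are present.

```python
def predict(weights, bias, x):
    """Output 1 if the weighted sum plus bias is positive, else 0."""
    z = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1 if z > 0 else 0


def train(samples, epochs=20, lr=1.0):
    weights, bias = [0.0, 0.0], 0.0
    for _ in range(epochs):
        for x, label in samples:
            error = label - predict(weights, bias, x)
            if error != 0:
                # Output and label disagree: adjust the weights and the bias.
                weights = [w + lr * error * xi for w, xi in zip(weights, x)]
                bias += lr * error
            # If they match, the output is confirmed and nothing changes.
    return weights, bias


# Hypothetical labelled data (human-provided labels on the right).
samples = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]

weights, bias = train(samples)
print(weights, bias)
print([predict(weights, bias, x) for x, _ in samples])
```

After training, the predictions match the labels for all four samples, so no further weight adjustments are triggered.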

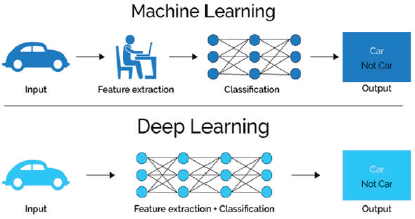

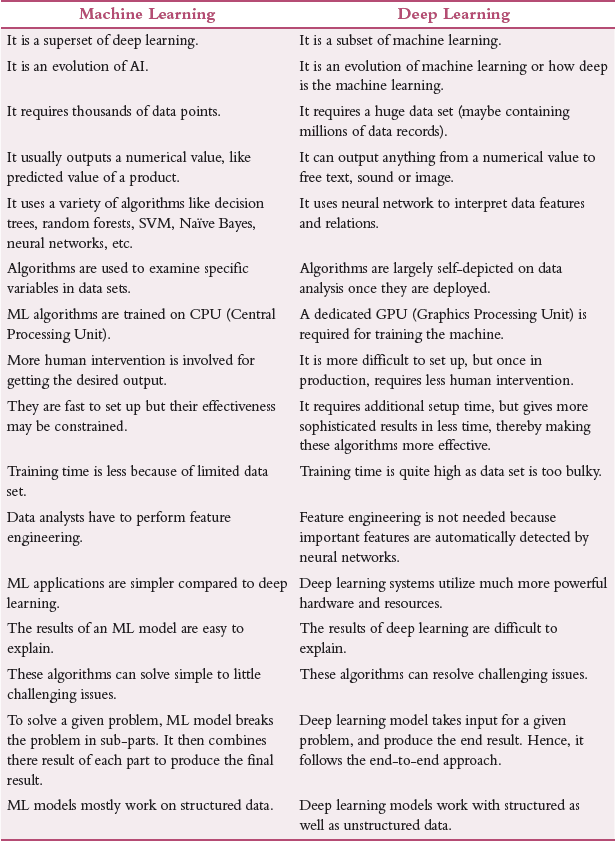

Machine Learning Vs Deep Learning

In a traditional machine learning workflow, relevant features are manually extracted from images, and a particular classifier has to be chosen and implemented manually. The extracted features are then used to create a model that categorizes the objects in the image. In contrast, a deep learning model extracts the relevant features from images automatically. Even the modelling steps are automatic (refer Figs 2.33 and 2.34).

FIGURE 2.33 Comparison between ML and DL

While a typical machine learning neural network model may have two to three hidden layers, deep networks can have as many as 150 hidden layers.

Moreover, deep learning performs ‘end-to-end learning’. This means that when a neural network is given raw data and a task (like classification), the model automatically learns how to do this task.

Last but not least, deep learning algorithms scale well with data. They are free from the convergence problem that shallow learning models may face, in which performance reaches a plateau at a certain level. Beyond that point, even after adding more data for training, the accuracy and performance of a shallow model do not change.

The biggest advantage of deep learning over machine learning techniques is that it frees users from worrying about trimming down the number of features used. The main drawback is that a deep learning model is very expensive to train and needs commercial-grade GPUs. Many data scientists treat a deep learning model as a ‘black box’ because the model is very complex and extremely difficult to understand.

FIGURE 2.34 Deep Learning supports more automation using a large number of hidden layers

TABLE 2.3 Differences between machine learning and deep learning

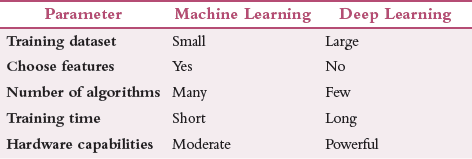

When to Use ML or DL?

If you have a complex problem to solve, huge amounts of data and powerful hardware, then consider implementing a deep learning solution. If any of these is missing, choose an ML model to solve your problem. Table 2.4 compares the two techniques in more detail.

TABLE 2.4 Comparison between machine learning and deep learning

Note that if you want to automate feature extraction, especially when a data set contains hundreds or thousands of features (or attributes), then use deep learning. Since only a few features are actually useful, a deep learning system learns the relevance of the features and selects the ones that will help it learn useful information. Feature extraction can also use PCA, t-SNE or other dimensionality reduction algorithms.

For example, an image processing application extracts the features in the image (like the eyes, the nose, lips and so on) and feeds them to the classification model. The deep learning model has layers of neural networks. The first layer learns small details from the picture; the next layers combine the previous knowledge to form more complex information.

Applications of Deep Learning

Deep learning techniques have already outperformed humans in some tasks, like classifying objects in images. For example, deep learning uses millions of images and thousands of hours of video to develop driverless cars. For this, it requires substantial computing power. High-performance GPUs (Graphics Processing Units) with a parallel architecture, when combined with cloud computing, allow development teams to reduce the training time for a deep learning network from weeks to hours or even less. Besides driverless cars, deep learning is also used in the following areas.

In the aerospace and defence sector, deep learning is used to identify objects from satellites that locate areas of interest, and safe or unsafe zones for military troops.

In the medical field, cancer researchers use deep learning to automatically detect cancer cells.

Industrial automation uses deep learning to improve worker safety around heavy machinery. It automatically detects when people or objects come within an unsafe distance of machines.

In electronic gadgets, deep learning is used in automated hearing and speech translation. For example, home assistance devices that recognize and respond to voice commands and know the user’s preferences are trained using deep learning algorithms.

Digital assistants like Siri, Cortana, Alexa, and Google Now use deep learning for natural language processing and speech recognition.

Deep learning assists in translation between languages. This can be very helpful for travellers, business people and those in government sectors. Skype and Google Translate use deep neural networks to translate spoken conversations in real time.

Several email systems, including Gmail, use deep learning techniques to identify spam messages before they even reach the inbox.

PayPal uses deep learning to prevent fraudulent payments.

Apps like CamFind implement deep neural networks to allow their users to take a picture of any object and discover details of that object using mobile visual search technology.

Google DeepMind’s WaveNet can generate speech mimicking the human voice that sounds more natural than speech systems presently on the market.

Facebook uses deep learning to identify and tag friends when a user uploads a new picture.

Example: How Deep Learning Is Used for Fraud Detection

To detect fraud, deep learning uses time, geographic location, IP address, type of retailer, and other features. In such a scenario, the first layer of the neural network processes raw data input (like the amount of the transaction) and passes it as an output to the next layer. The second layer accepts the output of the first layer as its input. This input is then processed together with additional information, like the user’s IP address, and the result is passed to the third layer.

The third layer may incorporate geographic location to make the results even better. This process continues across all levels of the neural network. The final layer generates the output, which may signal the analyst to freeze the user’s account. Some applications of AI that make extensive use of deep learning are illustrated in Fig. 2.35.

Deep learning is used by chatbots and service bots for providing customer service. Many companies use these techniques to respond in an intelligent and helpful way to text-based questions.

Image colourization uses deep learning techniques to transform black-and-white images into coloured ones. This work was earlier done manually. These days, deep learning algorithms generate impressive and accurate results by using the context and objects in a black-and-white image to recreate it in colour.

Deep learning algorithms, when used in medicine and pharmaceuticals, can help diagnose diseases and tumours in the body. They are also used to prescribe personalized medicines created specifically for an individual’s genome.

Personalized shopping and entertainment can use deep learning techniques to suggest what users should buy or watch next.
