This section looks at some of the latest trends in AI. Each of the topics discussed here is an area of extensive research.

8.4.1 Collaborative Systems

A great deal of current research aims to develop collaborative systems in which humans and machines (with AI) become effective partners, each compensating for the other’s limitations while augmenting human abilities. Some examples of machine-human collaboration are as follows.

- The computer game Foldit uses machine-human collaboration to fold simulated proteins. The folding task helps researchers understand how real-world proteins involved in human disease are formed. In the game, both the player and the AI perform operations. The AI is put to use where it excels, and human intuition and imagination are applied where they outperform machines.

- Two amateur chess players and an AI system worked collaboratively and won against a field of supercomputers and grandmasters.

- In 2014, Deep Knowledge Ventures, a Hong Kong-based venture capital company, appointed an AI system to its board of directors to realize the benefits of machine-human collaboration at the highest levels of business.

- In the military, unmanned aerial vehicles (UAVs, or drones) are used so that a machine takes the front line while a human provides support remotely.

- The Talos suit in the US military augments a front-line human with a powered exoskeleton. The exoskeleton’s integrated heating and cooling, vital-signs monitoring and heads-up display (HUD) collaborate with the wearer much like the suit seen in the Iron Man movies.

While many people believe that humans and machines can be good partners, others fear that relying too much on AI will cause massive job losses and make ordinary people dumb and lazy.

However, the good news is that, according to research involving 1,500 companies, companies that use AI mainly to automate processes and displace employees will see only short-term productivity gains. Better performance is expected only when humans and machines work together to actively enhance each other’s complementary strengths: the leadership, teamwork, creativity and social skills of humans, and the speed, scalability and quantitative capabilities of machines.

AI can boost our analytic and decision-making abilities and heighten creativity.

Humans Assisting Machines

In a human-machine collaborative system, humans need to perform three important roles. They must train machines to perform a particular task; explain the outcome of that task (especially when results are counterintuitive or controversial); and sustain the responsible use of machines so that machines do not harm mankind. For example, the European Union’s General Data Protection Regulation (GDPR) gives consumers the right to an explanation for any algorithm-based decision (such as the reason for acceptance or rejection of a loan). This provision will not only be a big step towards responsible AI but is also expected to create about 75,000 new jobs to administer the GDPR requirements.

Last but not least, companies also need employees who continuously monitor AI systems to ensure that they are functioning properly, safely and responsibly. For example, a self-driving car must not hit objects or any living being. Similarly, tech companies including Apple use AI to collect personal details about users to improve the user experience, but collecting too much data can compromise privacy, anger customers and even break the law. Therefore, employees make sure that AI uses data only for analysis and that the privacy of individual users is not violated.

Points to remember:

Humans must also train AI systems to interact with humans in the best possible way. For example, virtual assistants are given extensive training so that they come across as confident, caring and helpful without being bossy. Such training requires inputs from a team of experts (even including a poet, a novelist and a playwright).

These days, AI assistants are also being trained to display sympathy. For example, the start-up Koko, an offshoot of the MIT Media Lab, is developing an empathetic assistant. If a user had a bad day, Koko would say that it is sorry to hear it, ask for more information and offer advice to help the person see their issues in a different light.

8.4.2 Machines Assisting Humans

Smart machines help humans enhance their abilities in three ways: amplifying, interacting and embodying. AI systems boost humans’ creativity and their analytic and decision-making abilities by providing the right information at the right time. They can also interact with a company’s employees and customers in more effective ways. Even at home, digital assistants help people by carrying out their instructions.

SEB, a Swedish bank, uses Aida, a virtual assistant, to interact with millions of customers. Aida assists them in opening a bank account and making cross-border payments, and it interprets their tone of voice (satisfied or frustrated) to provide better service later. In the 30% of cases in which the system is not able to resolve an issue, it directs the call to a human customer-service representative and then monitors that interaction to learn how to resolve similar problems in the future.

Collect information about how Autodesk’s Dreamcatcher AI enhances the imagination of even exceptional designers.

Embodying

AI systems are embodied in machines (like robots) to augment the capabilities of a human worker. Using their sophisticated sensors, motors and actuators, these machines can recognize people and objects and work safely in factories, warehouses and laboratories. Such robots, also known as cobots, handle repetitive actions that involve heavy lifting or dangerous tasks. In such a collaborative environment, humans are free to do complementary tasks that require dexterity.

For example, Hyundai has extended the cobot concept with exoskeletons.

Exoskeletons are wearable robotic devices that adapt to an industrial worker’s location in real time, enabling the worker to perform the job with superhuman endurance and strength. Similarly, Mercedes-Benz uses cobot arms to customize cars according to the real-time choices consumers make at the dealer’s showroom.

8.4.3 Algorithmic Game Theory and Computational Social Choice

These days, with increased Internet connectivity and speed (a trend accelerated by the pandemic), more people play games like chess, checkers, poker and solitaire online. These games are played using a clearly defined set of rules, so machines need embedded algorithms that let them play fairly according to those rules. Game theory provides the foundation for such algorithms. In a multi-agent situation (when more than one participant is involved in solving a logical problem), game theory is used to choose from a set of options, knowing that our choice affects the choices of the opponent and that their decisions affect ours.

John von Neumann is credited with founding game theory.

We can understand game theory by studying the following five categories of games.

- Cooperative vs Non-cooperative Games: In cooperative games, participants can establish alliances to increase their chances of winning the game. In contrast, in a non-cooperative game, participants cannot form any alliance.

- Symmetric vs Asymmetric Games: In a symmetric game, each participant has the same goal(s). However, their strategy to win may be different. In contrast to this, in an asymmetric game, the participants have different (and at times conflicting) goals.

- Perfect vs Imperfect Information Games: In perfect information games like chess, all the players can see what moves the other player(s) are making. In an imperfect information game (like most card games), some information, such as the other players’ cards, is hidden.

- Simultaneous vs Sequential Games: In simultaneous games, multiple players take actions concurrently, without knowing what the others are choosing. In a sequential game, such as a board game, players move in turn and each player is aware of the other players’ previous actions.

- Zero-sum vs Non-zero-sum Games: In a zero-sum game, whatever one player gains causes an equal loss to the other players, but in a non-zero-sum game, multiple players can benefit when another player gains.

When using AI techniques in game theory, two concepts are very important—Nash equilibrium and inverse game theory.

Nash Equilibrium

The Nash equilibrium is a condition in which no player can gain anything by changing their own strategy while the other players keep theirs unchanged. Every player therefore accepts that there is no better option than the current situation, and any unilateral change of decision will not lead to a better result.

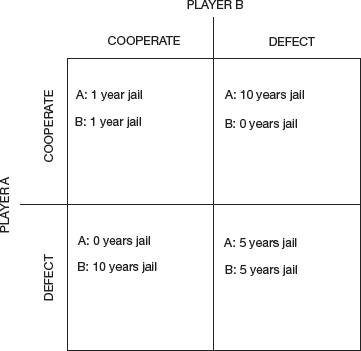

Example: A typical example of Nash equilibrium is the Prisoner’s Dilemma. Suppose two criminals are arrested and kept in confinement without being allowed to communicate with each other. Fig. 8.21 shows the possible outcomes.

- If only one of the two prisoners confesses and testifies that the other committed the crime, the one who confesses will be set free and the other will be sent to prison for 10 years.

- If neither of them confesses, then both will be sent to prison for only 1 year.

- If both confess, then both will be sent to prison for 5 years.

In this case, the Nash equilibrium is reached when both criminals betray each other, because whatever the other prisoner does, each one gets a shorter sentence by confessing.
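The equilibrium can be checked mechanically. The following is a minimal sketch, assuming the sentences listed above are encoded as a lookup table; the names `ACTIONS`, `YEARS`, `is_nash` and `nash_equilibria` are illustrative and do not come from any library. An outcome is accepted only if neither prisoner can shorten their own sentence by changing their choice alone.

```python
from itertools import product

ACTIONS = ("silent", "confess")

# Years in prison for (prisoner A, prisoner B), keyed by (A's action, B's action).
# The numbers are the three cases described in the text.
YEARS = {
    ("silent", "silent"): (1, 1),
    ("silent", "confess"): (10, 0),
    ("confess", "silent"): (0, 10),
    ("confess", "confess"): (5, 5),
}

def is_nash(a, b):
    """True if neither prisoner can reduce their own sentence by deviating alone."""
    a_years, b_years = YEARS[(a, b)]
    a_ok = all(YEARS[(alt, b)][0] >= a_years for alt in ACTIONS)
    b_ok = all(YEARS[(a, alt)][1] >= b_years for alt in ACTIONS)
    return a_ok and b_ok

def nash_equilibria():
    """Enumerate all four outcomes and keep the stable ones."""
    return [(a, b) for a, b in product(ACTIONS, ACTIONS) if is_nash(a, b)]

if __name__ == "__main__":
    print(nash_equilibria())  # [('confess', 'confess')]
```

Note that the equilibrium (5 years each) is worse for both prisoners than staying silent (1 year each), which is exactly what makes this game a dilemma.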

Inverse Game Theory

Game theory is used to understand the dynamics of a game to optimize the possible outcome for its players. In contrast, inverse game theory designs a game based on the players’ strategies and aims. It is key to designing environments for AI agents.

Practical Examples

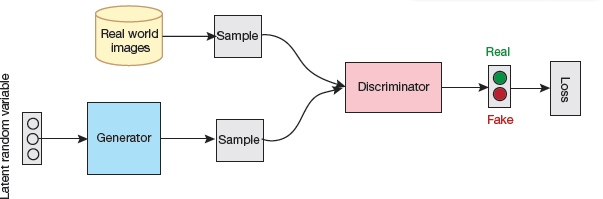

A generative adversarial network (GAN) is a machine learning (ML) model in which two neural networks compete with each other to become more accurate in their predictions.

Nash equilibrium can be attained more easily in symmetric games than in asymmetric games. However, in the real world, asymmetric games are more common.

A GAN contains two models: a generative model and a discriminative model (Fig. 8.22).

FIGURE 8.22 GAN Architecture [3]

Generative models accept some features as input to examine their distributions and understand how they have been produced.

Discriminative models take the input features and predict the class to which a sample belongs.

For example, in a GAN, the generative model uses input features to create new samples that resemble the main features of the original samples.

The original samples and the newly generated samples are then passed to the discriminative model to identify which samples are genuine and which are fake. A common application of GANs is to generate images (as given in Fig. 8.23) and then distinguish between real and fake ones.

Credit: doglikehorse / Shutterstock

FIGURE 8.23 Generate Images using GAN Models

Training a GAN can itself be viewed as a game. The players (the two models) challenge each other: while one player creates fake samples to confuse the other, the other tries to tell the genuine samples from the fake ones. The game is played iteratively until a Nash equilibrium is reached. In each iteration, the learning parameters are updated to reduce each model’s loss.
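This two-player game is commonly written as a minimax objective. The formulation below is the standard one from the GAN literature rather than something stated in this section, and the symbols are assumptions introduced for illustration: $G$ is the generative model, $D$ is the discriminative model, $p_{\text{data}}$ is the distribution of the original samples and $p_z$ is the distribution of the random input fed to $G$.

$$
\min_{G} \max_{D} V(D, G) = \mathbb{E}_{x \sim p_{\text{data}}}\left[\log D(x)\right] + \mathbb{E}_{z \sim p_z}\left[\log\left(1 - D(G(z))\right)\right]
$$

The discriminator tries to make $V$ large by scoring genuine samples near 1 and generated samples near 0, while the generator tries to make $V$ small by producing samples that the discriminator scores as genuine. At the equilibrium of this idealized game, the discriminator can do no better than output $1/2$ for every sample.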

8.4.4 Multi-Agent Reinforcement Learning (MARL)

Reinforcement learning (RL) trains an agent (model) to learn through interaction with its environment. RL was initially developed on the basis of Markov decision processes: an agent placed in a stochastic, stationary environment tries to learn a policy through a reward/punishment mechanism, and it eventually converges to a satisfactory policy.
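A reward-driven learning loop of this kind can be sketched with tabular Q-learning, one common RL algorithm (the text does not name a specific one). Everything in this example is an assumption made for illustration: the environment is a hypothetical five-cell corridor with a goal at the right end, it is deterministic rather than stochastic to keep the example short, and the hyperparameter values are arbitrary.

```python
import random

N_STATES = 5          # cells 0..4 on a line; cell 4 is the goal (terminal)
MOVES = (-1, +1)      # action 0 = move left, action 1 = move right

def step(state, action):
    """Toy environment: reward 1 only when the goal cell is reached."""
    next_state = min(max(state + MOVES[action], 0), N_STATES - 1)
    done = next_state == N_STATES - 1
    return next_state, (1.0 if done else 0.0), done

def train(episodes=500, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    """Learn a table q[state][action] of estimated long-term rewards."""
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]
    for _ in range(episodes):
        state = 0
        for _ in range(100):  # cap the episode length
            # Explore with probability epsilon (or when the estimates tie),
            # otherwise exploit the action with the higher estimate.
            if rng.random() < epsilon or q[state][0] == q[state][1]:
                action = rng.randrange(2)
            else:
                action = 0 if q[state][0] > q[state][1] else 1
            next_state, reward, done = step(state, action)
            # Move the estimate towards reward + discounted best future value.
            target = reward + gamma * max(q[next_state])
            q[state][action] += alpha * (target - q[state][action])
            state = next_state
            if done:
                break
    return q

def greedy_policy(q):
    """Best action in every non-terminal cell according to the table."""
    return ["right" if row[1] > row[0] else "left" for row in q[:-1]]

if __name__ == "__main__":
    print(greedy_policy(train()))
```

After training, the greedy policy moves right in every cell, which is the satisfactory policy the agent converges to in this single-agent, stationary setting.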

However, this convergence is no longer guaranteed when multiple agents are placed in the same environment, because each agent’s learning now depends not only on its interaction with the environment but also on its interaction with the other agents.

Modelling systems with multiple agents is not a trivial task, as increasing the number of agents exponentially increases the number of possible ways in which they can interact with each other. Multi-agent reinforcement learning models can therefore use mean field scenarios (MFS), which reduce this complexity by assuming a priori that all agents have similar reward functions.
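The exponential growth can be made concrete with a simple count. As an illustrative assumption, suppose each of $n$ agents chooses among the same $|A|$ actions at every step (the symbols $n$ and $|A|$ are introduced here and do not appear in the original text). The number of joint actions the system must consider is then

$$
|A|^{n}, \qquad \text{for example } 4^{10} = 1{,}048{,}576 \text{ joint actions for 10 agents with 4 actions each.}
$$

Adding one more agent multiplies this count by $|A|$ again, which is why simplifying assumptions such as similar reward functions become necessary.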

Example: To improve the traffic flow in a city, a single AI-powered self-driving car can interact with the external environment without difficulty. But things get complicated when many such cars must act as a group. In such a case, a car might come into conflict with another when both of them find it most convenient to follow a certain route.

The above situation can be modelled using game theory. The cars would be the different players, and the Nash equilibrium would be the point of collaboration at which no car can do better by changing its route on its own.
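The route conflict can be sketched as a small two-player game. The travel times below are hypothetical numbers chosen for illustration, as are the names `ROUTES`, `TRAVEL_TIME`, `is_nash` and `route_equilibria`. Each route becomes slower when both cars use it, and an outcome is stable when no car can shorten its own trip by switching routes alone.

```python
from itertools import product

N_CARS = 2
ROUTES = ("highway", "side_road")

# Hypothetical travel times in minutes, keyed by how many cars share the route.
TRAVEL_TIME = {
    "highway": {1: 10, 2: 18},
    "side_road": {1: 15, 2: 22},
}

def travel_times(choices):
    """Travel time of each car given the route chosen by every car."""
    return tuple(TRAVEL_TIME[route][choices.count(route)] for route in choices)

def is_nash(choices):
    """True if no car can shorten its own trip by switching routes alone."""
    current = travel_times(choices)
    for car in range(len(choices)):
        for alt in ROUTES:
            deviated = choices[:car] + (alt,) + choices[car + 1:]
            if travel_times(deviated)[car] < current[car]:
                return False
    return True

def route_equilibria():
    """Check every combination of route choices and keep the stable ones."""
    return [c for c in product(ROUTES, repeat=N_CARS) if is_nash(c)]

if __name__ == "__main__":
    print(route_equilibria())
    # [('highway', 'side_road'), ('side_road', 'highway')]
```

With these numbers both cars would prefer the highway, yet the only stable outcomes are the two in which the cars split across the routes. Which car takes the slower side road is something the game itself does not decide, which is where coordination between the agents comes in.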

8.4.5 Neuromorphic Computing

Neuromorphic computing is a technique in which a computer (both hardware and software) is modelled based on the architecture of the human brain and its nervous system. It is a science that draws concepts from multiple disciplines including computer science, biology, mathematics, electronic engineering and physics.

The main aim of neuromorphic computing is to create artificial neural systems inspired by biological structures. Such a structure would enable the machine to behave like a human brain in the following ways.

First, to learn, retain information and even make logical decisions as the human brain does.

Second, to acquire new information and work like a human brain.

Thus, a computer needs to be transformed into a cognition machine.

Did you know that our brain uses roughly 20 watts of power on average, which is about half that of a standard laptop?

How Does Neuromorphic Computing Work?

Traditional neural network and machine learning systems work well for existing algorithms, but they either provide fast computation or focus on low power use, so there is always a trade-off between the two goals. Neuromorphic systems perform fast computation while consuming less power. Additionally, they resemble the human brain as they have the following features:

- They are massively parallel, to handle multiple tasks at once.

- They are event-driven, as they respond to events dynamically based on changing environmental conditions.

- They are economical in terms of power used.

- They are flexible as they are highly adaptable and are able to generalize.

- They are robust and fault-tolerant, since they produce results even when some component(s) have failed.

Neuromorphic computing achieves this brain-like function and efficiency by building artificial neural systems that implement ‘neurons’ (nodes that process information) and ‘synapses’ (the connections between those nodes) to transfer electrical signals using analog circuitry. This structure controls the amount of electricity passed from one node to another to mimic the varying degrees of connection strength that occur in a human brain. Such a system of neurons and synapses that transmit these electric pulses is known as a spiking neural network (SNN). This capability is not available in traditional neural networks (which use digital signals).

Neuromorphic systems deviate from the von Neumann architecture and introduce a new chip architecture that collocates memory and processing on each individual neuron. Hence, there is no need for a separate memory unit (MU), central processing unit (CPU) and data paths. Having separate parts requires information to be repeatedly moved back and forth between different components to complete a given task, creating a bottleneck for time and energy efficiency. This is known as the von Neumann bottleneck.

By bringing memory onto the neuromorphic chip, information can be processed with far less data movement, making the chip faster and more energy-efficient.

Neuromorphic Computing and Artificial General Intelligence (AGI)

We have seen that artificial general intelligence (AGI), in which a machine exhibits intelligence equal to that of humans, has not yet been achieved and may never be reached with traditional computers. With neuromorphic computing, however, researchers are more optimistic.

Example: The Human Brain Project, featuring the neuromorphic supercomputer SpiNNaker, aims to produce a functioning simulation of the human brain and is one of the most closely watched research projects related to AGI.

A machine is said to achieve AGI when the machine can do the following:

- Reason and make judgments in an uncertain situation

- Plan

- Learn

- Communicate using natural language

- Represent knowledge

- Integrate the above skills to fulfil a goal

An AGI may even have the ability to imagine, gain from experience and be self-aware. To confirm whether a system has developed AGI or not, it can be made to pass the Turing Test or the Robot College Student Test, in which a machine enrolls in classes and obtains a degree like a human would.

Debatable Issue

Even if a machine develops human-level intelligence, the question arises of how it should be treated ethically and legally. While some researchers argue that it should be treated as a nonhuman animal in the eyes of the law, others say that a lot still has to be done before machines can have consciousness.

Comparing Human Brain with Neuromorphic Computing

Brains need far less energy than supercomputers. While a human brain uses about 20 watts, the Fugaku supercomputer needs a power supply of about 28 megawatts, so the brain runs on about 0.00007% of Fugaku’s power.
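The percentage follows directly from the two power figures quoted above:

$$
\frac{20\ \text{W}}{28 \times 10^{6}\ \text{W}} \approx 7.1 \times 10^{-7} \approx 0.00007\%
$$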

Supercomputers need elaborate cooling systems, but our brain works at about 37°C inside the skull.

Supercomputers can perform millions or billions of complex calculations per second, but the human brain is far more adaptable. A single brain can write poetry, identify a familiar face in a crowd, drive a car, learn a new language, make decisions instantly, respond to events and do much more.

While traditional computers designed on von Neumann architecture are largely serial, brains use massively parallel computing. Brains are also more fault-tolerant than computers. Therefore, harnessing techniques used by a human brain will be the key to making more powerful neuromorphic computers in the future.

So How Can You Make a Computer That Works Like the Human Brain?

To understand how neuromorphic computers work, let us first recapitulate how the brain works.

When we feel hot or cold, neurons (a type of nerve cell) carry messages to the brain. To pass a message along, each neuron releases chemicals across a gap called a synapse to the next neuron, and this repeats until the message reaches the brain. Once the brain identifies the sensation, it sends an appropriate message back through neurons to the point that felt the sensation.

An action potential is triggered either by multiple inputs arriving at once (spatial summation) or by inputs building up over time (temporal summation). The brain transfers information quickly and efficiently through the huge interconnectivity of synapses, where one neuron might be connected to as many as 10,000 other neurons.

Neuromorphic computers work like human brains by using spiking neural networks (SNNs). While a transistor in a conventional computer can only be on (1) or off (0), an SNN can produce more than just these two outputs and transfers information in both temporal and spatial ways, as the human brain does.
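Spatial and temporal triggering can be illustrated with a leaky integrate-and-fire neuron, a simple model often used to describe spiking neurons. The text does not prescribe this model, so the function `simulate_lif` and its parameter values (`weight`, `decay`, `threshold`) are assumptions made for this sketch. The neuron accumulates incoming spikes in a potential that leaks away over time, and it fires when the potential crosses a threshold.

```python
def simulate_lif(input_spikes, weight=0.6, decay=0.8, threshold=1.0):
    """Simulate one leaky integrate-and-fire neuron over discrete time steps.

    input_spikes[t] is the number of input spikes arriving at step t.
    Returns a list with 1 where the neuron fires and 0 elsewhere.
    """
    potential = 0.0
    output = []
    for n_inputs in input_spikes:
        # Leak part of the stored potential, then add the new inputs.
        potential = potential * decay + weight * n_inputs
        if potential >= threshold:
            output.append(1)
            potential = 0.0  # reset after firing
        else:
            output.append(0)
    return output

if __name__ == "__main__":
    print(simulate_lif([2, 0, 0, 0]))  # spatial: two inputs at once  -> [1, 0, 0, 0]
    print(simulate_lif([1, 1, 0, 0]))  # temporal: inputs close in time -> [0, 1, 0, 0]
    print(simulate_lif([1, 0, 0, 1]))  # inputs too far apart           -> [0, 0, 0, 0]
```

The same two input spikes either trigger an output or not depending on when they arrive, so the timing of spikes carries information in addition to their presence.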

Neuromorphic systems can be either digital or analogue. Synapses are controlled either by software or by memristors. Memristors can store a range of values instead of just one and zero. Like synapses in the human brain, they can vary the strength of a connection between two neurons, which allows brain-based systems to learn.

Uses of Neuromorphic Systems

As of now, to perform heavy computational tasks, devices like smartphones hand off processing to a cloud-based system. The cloud processes the query and sends the output to the device. This scenario will change with neuromorphic systems. In such a system, instead of passing queries and responses back and forth, computations would be done within the device itself.

The current generation of AI is rules-based and is extensively trained on datasets to generate a particular outcome. However, the human brain does not work like this. Researchers are trying to make the next generation of AI deal with more brain-like problems, such as constraint satisfaction, in which a system has to find the optimum solution to a problem with a lot of restrictions.
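To show what a constraint satisfaction problem looks like, here is a minimal sketch that solves one with conventional backtracking search. It is not a neuromorphic method, and it finds any assignment that satisfies the restrictions rather than an optimum one. The scheduling scenario and the names `solve_csp`, `tasks`, `slots` and `conflicts` are hypothetical.

```python
def solve_csp(variables, domains, conflicts, assignment=None):
    """Backtracking search: give every variable a value so that no two
    conflicting variables share the same value. Returns a dict or None."""
    assignment = {} if assignment is None else assignment
    if len(assignment) == len(variables):
        return assignment
    var = next(v for v in variables if v not in assignment)
    for value in domains[var]:
        # A value is allowed if no already-assigned conflicting variable uses it.
        if all(assignment.get(other) != value for other in conflicts[var]):
            result = solve_csp(variables, domains, conflicts,
                               {**assignment, var: value})
            if result is not None:
                return result
    return None  # no value works for this variable, so backtrack

# Hypothetical problem: four tasks, three time slots, and pairs of tasks
# that need the same machine and therefore cannot share a slot.
tasks = ["A", "B", "C", "D"]
slots = {task: ["9am", "10am", "11am"] for task in tasks}
conflicts = {"A": ["B", "C"], "B": ["A", "C"], "C": ["A", "B", "D"], "D": ["C"]}

if __name__ == "__main__":
    print(solve_csp(tasks, slots, conflicts))
    # {'A': '9am', 'B': '10am', 'C': '11am', 'D': '9am'}
```

The search tries values one variable at a time and undoes a choice as soon as it violates a restriction, which becomes expensive as the number of variables and restrictions grows.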

Neuromorphic systems also perform well with noisy and uncertain data.

Examples of Neuromorphic Computer Systems Used Today

Sixty-four of Intel’s neuromorphic chips, named Loihi, are combined into a system called Pohoiki Beach, which comprises about 8 million neurons (a figure expected to reach 100 million in the near future). Such chips are being used to create artificial skin and to develop powered prosthetic limbs.

IBM’s neuromorphic system, TrueNorth, was launched in 2014 and has since been scaled up to a system with 64 million neurons and 16 billion synapses. IBM, in partnership with the US Air Force Research Laboratory, is trying to create a ‘neuromorphic supercomputer’ known as Blue Raven for building smarter, lighter, less energy-demanding drones.

The EU-funded Human Brain Project (HBP), a 10-year project that started in 2013, has led to two major neuromorphic initiatives, SpiNNaker and BrainScaleS. In 2018, a million-core SpiNNaker system, the largest neuromorphic supercomputer of that time, went live. It is hoped that this system will eventually scale up to model one billion neurons. BrainScaleS has a similar goal, and its architecture is now in its second generation, BrainScaleS-2.

Challenges to Using Neuromorphic Systems

Shifting from von Neumann to neuromorphic computing is not easy or straightforward. For example, when analysing visual input, traditional systems treat it as a series of individual frames, but a neuromorphic processor would encode this information as changes in a visual field over time.
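The difference between the two encodings can be sketched in a few lines. This is an illustration only, assuming each frame is a flat list of pixel intensities; the function `frames_to_events` and the sample data are hypothetical. Instead of keeping every pixel of every frame, the event-based form records an entry only where a pixel changes between consecutive frames.

```python
def frames_to_events(frames, threshold=0):
    """Convert a sequence of frames (lists of pixel intensities) into
    (time, pixel_index, change) events, emitted only where a pixel changes
    by more than `threshold` between consecutive frames."""
    events = []
    for t in range(1, len(frames)):
        for i, (prev, cur) in enumerate(zip(frames[t - 1], frames[t])):
            delta = cur - prev
            if abs(delta) > threshold:
                events.append((t, i, delta))
    return events

# A bright spot appears at pixel 1, stays still, then moves to pixel 2.
frames = [
    [0, 0, 0, 0],
    [0, 5, 0, 0],
    [0, 5, 0, 0],
    [0, 0, 5, 0],
]

if __name__ == "__main__":
    print(frames_to_events(frames))
    # [(1, 1, 5), (3, 1, -5), (3, 2, 5)]
```

The three frames after the first hold 12 pixel values, but only 3 events are needed to describe what changed, and nothing is emitted while the scene is static.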

New programming languages need to be developed to exploit the change in the underlying architecture. New kinds of memory, storage and sensor technology need to be created to take full advantage of neuromorphic devices. This may even change the entire process of creating and integrating hardware and software components.
