Category: 3. Machine Learning

-

Conclusion

These algorithms can get complicated and do require strong technical skills. But it is important not to get too bogged down in the technology. After all, the focus is to find ways to use machine learning to accomplish clear objectives. Again, Stitch Fix is a good place to get guidance on this. In the November issue of…

-

K-Means Clustering (Unsupervised/Clustering)

The k-Means clustering algorithm, which is effective for large datasets, puts similar, unlabeled data into different groups. The first step is to select k, which is the number of clusters. To help with this, you can visualize the data to see if there are noticeable grouping areas. Here’s a look at sample data,…
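
As a rough illustration of the idea, here is a minimal k-means sketch in plain Python. The sample points, the starting centroids, and the function name `k_means` are all made up for this example and are not from the book; a real project would more likely use a library.

```python
from math import dist

def k_means(points, centroids, iterations=10):
    """Assign each point to its nearest centroid, then move each centroid
    to the average of its assigned points. Repeat a fixed number of times."""
    for _ in range(iterations):
        clusters = [[] for _ in centroids]
        for p in points:
            nearest = min(range(len(centroids)), key=lambda i: dist(p, centroids[i]))
            clusters[nearest].append(p)
        # An empty cluster keeps its previous centroid.
        centroids = [
            tuple(sum(axis) / len(cluster) for axis in zip(*cluster)) if cluster else centroids[i]
            for i, cluster in enumerate(clusters)
        ]
    return centroids, clusters

# Hypothetical 2-D data with two visible groups, so k = 2.
points = [(1.0, 1.0), (1.5, 2.0), (1.0, 1.5), (8.0, 8.0), (9.0, 8.5), (8.5, 9.0)]
centroids, clusters = k_means(points, centroids=[points[0], points[3]])
print(centroids)
print(clusters)
```

Here the starting centroids are picked by hand to keep the run deterministic; in practice they are often chosen at random, which can change the result.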

-

Ensemble Modelling (Supervised Learning/Regression)

Ensemble modelling means using more than one model for your predictions. Even though this increases the complexity, this approach has been shown to generate strong results. To see this in action, take a look at the “Netflix Prize,” which began in 2006. The company announced it would pay $1 million to anyone or any team that…
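
One simple way to combine models is to average their predictions. The sketch below assumes three made-up models (plain functions with invented coefficients) and a hypothetical helper `ensemble_predict`; it is only meant to show the averaging step, not how the Netflix Prize teams built their systems.

```python
from statistics import mean

def ensemble_predict(models, x):
    """Return the average of every model's prediction for input x."""
    return mean(model(x) for model in models)

# Three hypothetical models that each estimate the same quantity slightly differently.
models = [
    lambda x: 2.0 * x + 1.0,
    lambda x: 1.8 * x + 1.5,
    lambda x: 2.2 * x + 0.5,
]

prediction = ensemble_predict(models, 3)
print(prediction)
```

Averaging tends to smooth out the individual models' errors when those errors point in different directions.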

-

Decision Tree (Supervised Learning/Regression)

No doubt, clustering may not work on some datasets. But the good news is that there are alternatives, such as a decision tree. This approach generally works better with nonnumerical data. The start of a decision tree is the root node, which is at the top of the flow chart. From this point, there will be a tree of decision paths,…
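
To make the flow-chart picture concrete, here is a small hand-built tree walked from the root node down to a leaf. The questions, the outcomes, and the function name `decide` are invented for illustration; a real decision tree would be learned from data rather than written by hand.

```python
# Each inner node asks a yes/no question; each leaf is a final decision (a string).
tree = {
    "question": "has_income",  # root node
    "yes": {
        "question": "good_credit_history",
        "yes": "approve",
        "no": "review",
    },
    "no": "decline",
}

def decide(node, answers):
    """Follow the decision path from the root until a leaf is reached."""
    while isinstance(node, dict):
        branch = "yes" if answers[node["question"]] else "no"
        node = node[branch]
    return node

result = decide(tree, {"has_income": True, "good_credit_history": False})
print(result)  # review
```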

-

Linear Regression (Supervised Learning/Regression)

Linear regression shows the relationship between certain variables. The equation—assuming there is enough quality data—can help predict outcomes based on inputs. Example: Suppose we have data on the number of hours spent studying for an exam and the grade. See Table 3-6. Table 3-6. Chart for hours of study and grades Hours of Study Grade Percentage 1 0.75 1…
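
The fitting step can be sketched with ordinary least squares in a few lines of Python. The hours and grades below are hypothetical stand-ins, not the values from Table 3-6, and `fit_line` is a name chosen for this example.

```python
def fit_line(xs, ys):
    """Ordinary least squares for one input: returns (slope, intercept)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept

# Hypothetical data: hours of study and grade as a fraction of 1.
hours = [1, 2, 3, 4, 5]
grades = [0.60, 0.65, 0.70, 0.75, 0.80]

slope, intercept = fit_line(hours, grades)
predicted = slope * 6 + intercept  # estimated grade after 6 hours of study
print(slope, intercept, predicted)
```

With this made-up data the line is roughly grade = 0.05 × hours + 0.55, so each extra hour of study adds about five percentage points.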

-

K-Nearest Neighbor (Supervised Learning/Classification)

The k-Nearest Neighbor (k-NN) is a method for classifying a dataset (k represents the number of neighbors). The theory is that those values that are close together are likely to be good predictors for a model. Think of it as “Birds of a feather flock together.” A use case for k-NN is the credit score, which is…
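
A minimal k-NN classifier can be sketched as a majority vote among the k closest labeled points. The training points, the labels "low" and "high", and the function name `knn_predict` are assumptions made for this example.

```python
from collections import Counter
from math import dist

def knn_predict(training, query, k=3):
    """Label the query with the most common label among its k nearest neighbors."""
    nearest = sorted(training, key=lambda row: dist(row[0], query))[:k]
    votes = Counter(label for _, label in nearest)
    return votes.most_common(1)[0][0]

# Hypothetical labeled points: (features, label).
training = [
    ((1.0, 1.0), "low"), ((1.2, 0.8), "low"), ((0.9, 1.3), "low"),
    ((5.0, 5.2), "high"), ((5.5, 4.8), "high"), ((4.9, 5.1), "high"),
]

print(knn_predict(training, (1.1, 1.0)))  # low
print(knn_predict(training, (5.1, 5.0)))  # high
```

An odd k is a common choice with two classes because it avoids tied votes.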

-

Naïve Bayes Classifier (Supervised Learning/Classification)

Earlier in this chapter, we looked at Bayes’ theorem. As for machine learning, this has been modified into something called the Naïve Bayes Classifier. It is “naïve” because it assumes that the variables are independent of each other; that is, the occurrence of one variable has nothing to do with the others. True, this may…
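
The independence assumption means a class score is just the class prior multiplied by one probability per feature. The sketch below shows this for yes/no features; the spam-style data, the feature meanings, and the function names `train` and `predict` are hypothetical, and the add-one smoothing is a common convention rather than something stated in the text.

```python
from collections import Counter, defaultdict

def train(rows):
    """Count how often each class occurs and how often each feature value
    occurs within each class. rows is a list of (features, label)."""
    class_counts = Counter(label for _, label in rows)
    feature_counts = defaultdict(Counter)  # (label, position) -> value counts
    for features, label in rows:
        for i, value in enumerate(features):
            feature_counts[(label, i)][value] += 1
    return class_counts, feature_counts

def predict(model, features):
    """Pick the class with the highest prior times per-feature likelihoods."""
    class_counts, feature_counts = model
    total = sum(class_counts.values())
    scores = {}
    for label, count in class_counts.items():
        score = count / total  # prior probability of the class
        for i, value in enumerate(features):
            # Add-one smoothing, assuming each feature has two possible values.
            score *= (feature_counts[(label, i)][value] + 1) / (count + 2)
        scores[label] = score
    return max(scores, key=scores.get)

# Hypothetical messages: (mentions_offer, contains_link) -> label.
rows = [
    ((True, True), "spam"), ((True, False), "spam"), ((True, True), "spam"),
    ((False, False), "ham"), ((False, True), "ham"), ((False, False), "ham"),
]
model = train(rows)
print(predict(model, (True, True)))    # spam
print(predict(model, (False, False)))  # ham
```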

-

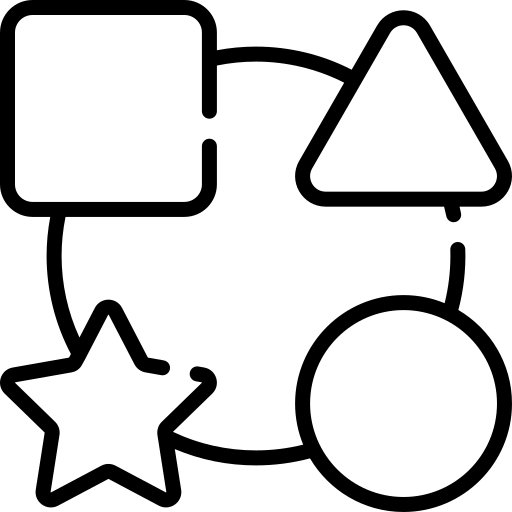

Common Types of Machine Learning Algorithms

There is simply not enough room in this book to cover all the machine learning algorithms! Instead, it’s better to focus on the most common ones. In the remaining part of this chapter, we’ll take a look at those for the following: Figure 3-3 shows a general framework for machine learning algorithms.

-

Applying Algorithms

Some algorithms are quite easy to calculate, while others require complex steps and mathematics. The good news is that you usually do not have to compute an algorithm because there are a variety of languages like Python and R that make the process straightforward. As for machine learning, an algorithm is typically different from a traditional…